RAG vs Fine-Tuning for LLMs (2026): Production Guide

RAG vs fine-tuning in 2026 explained with real tradeoffs, latest trends, and a practical decision framework for production LLM systems.

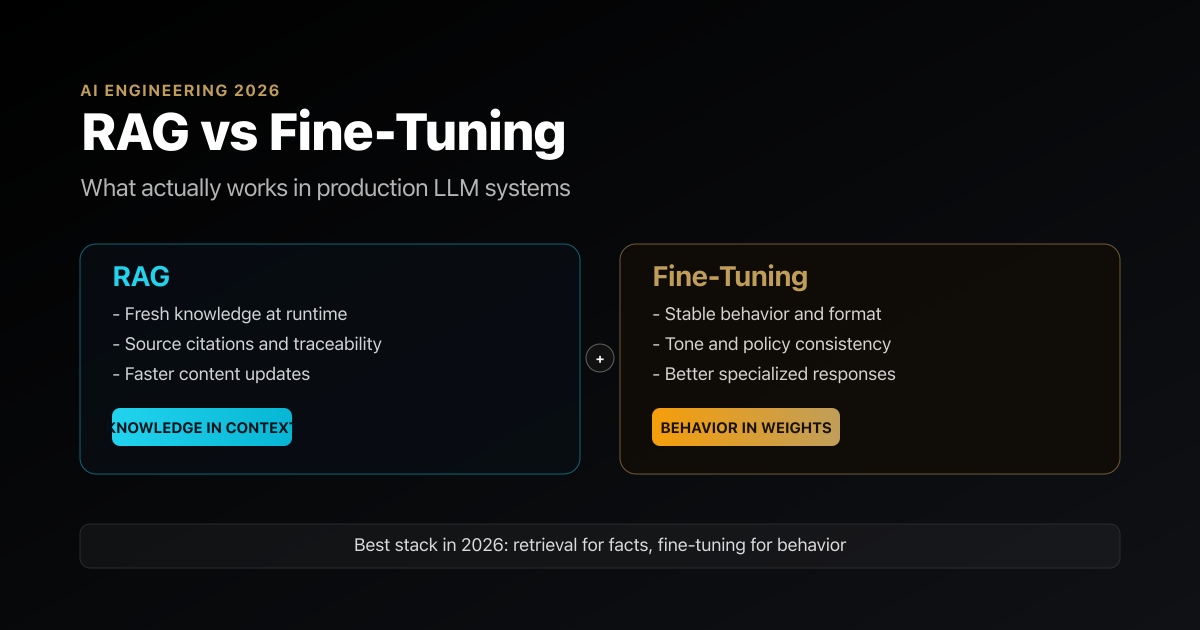

TL;DR

- RAG is still the default for fast-changing knowledge, citations, and compliance-heavy use cases.

- Fine-tuning is for behavior, not your constantly changing knowledge base.

- Long context did not kill RAG; recent benchmarks show there is no universal winner.

- Best 2026 pattern is hybrid: retrieval for facts, fine-tuning for style, policy, and decision behavior.

- If your knowledge base is small enough, you can often skip RAG and use full-context + prompt caching first.

Introduction

Most teams still ask the wrong question: “Should we use RAG or fine-tuning?”

In 2026, that framing is outdated.

You are not choosing one forever. You are designing where your intelligence lives: in model weights, in external knowledge, or both. Teams that get this right ship reliable AI products. Teams that get it wrong burn months on expensive training runs that should have been a retrieval pipeline.

The short answer is this: put volatile knowledge in retrieval, put stable behavior in fine-tuning, and stop trying to force one tool to do both jobs.

What Is RAG vs Fine-Tuning?

Retrieval-Augmented Generation (RAG) means your LLM pulls relevant chunks from an external knowledge source at runtime and uses them as context before generating an answer.

Fine-tuning means updating model parameters so the model internalizes task behavior, style, or domain patterns.

Think of it this way:

- RAG changes what the model can see right now.

- Fine-tuning changes how the model tends to behave every time.

That distinction is the single most useful mental model for architecture decisions.
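To make the "what the model can see" half concrete, here is a minimal sketch of the retrieval step. The word-overlap scoring is a toy stand-in for a real embedding model, and `tokenize`, `retrieve`, `build_prompt`, and `DOCS` are hypothetical names used only for illustration.

```python
import re

def tokenize(text: str) -> set[str]:
    """Lowercased word set; a toy stand-in for an embedding model."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k docs sharing the most words with the query."""
    q = tokenize(query)
    ranked = sorted(docs, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]

def build_prompt(query: str, chunks: list[str]) -> str:
    """Put retrieved chunks in front of the question as numbered sources."""
    sources = "\n".join(f"[{i + 1}] {c}" for i, c in enumerate(chunks))
    return (
        "Answer using only the sources below and cite them.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )

# Hypothetical knowledge base, for illustration only.
DOCS = [
    "Refunds are available within 30 days of purchase.",
    "Our office is closed on public holidays.",
    "Shipping takes 3 to 5 business days.",
]

query = "Are refunds available after purchase?"
prompt = build_prompt(query, retrieve(query, DOCS, k=1))
```

Nothing in the model changes here. Edit `DOCS` and the next request sees the new facts, which is exactly what fine-tuning cannot give you without another training run.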

DECISION LENS

Most architecture mistakes happen because teams try to store both behavior and changing knowledge in the same place.

RAG

Changes what the model can see

Retrieval is best when the facts are volatile, private, or need traceability.

- Fresh knowledge without retraining

- Better citations and auditability

- Fits policy, support, and docs-heavy systems

FINE-TUNING

Changes how the model behaves

Fine-tuning is best when the job is consistency, formatting, routing, or product-specific decision behavior.

- Better tone and structure control

- Useful for classifiers and structured outputs

- Not the right place for frequently changing facts

LONG CONTEXT

Helpful, but not a silver bullet

Bigger context windows reduce some retrieval overhead, but they do not automatically solve ranking, salience, or noise problems.

- Good for smaller knowledge bases

- Still requires evaluation

- Not a universal RAG replacement

PRODUCTION DEFAULT

Hybrid is the modern answer

Use retrieval for truth and freshness, then use tuning for behavior, policy, and format reliability.

- Most serious systems end up composable

- Cleaner separation of concerns

- Easier to evolve over time

Why This Matters More in 2026

LLM systems moved from demos to audited production workflows. That changed the bar:

- You need traceability (where did this answer come from?).

- You need fast iteration (update docs today, not retrain next month).

- You need predictable cost/latency at scale.

At the same time, fine-tuning got better and more practical. OpenAI expanded fine-tuning controls, validation metrics, and workflow tooling, and added multimodal (vision) fine-tuning support. So yes, fine-tuning is more usable now than it was in 2023.

But the biggest trend is not “RAG is dead” or “fine-tuning is dead.”

The biggest trend is composable adaptation stacks.

The 2026 Deep Dive: What Changed Recently

1) Retrieval quality improved a lot

The weak point in many RAG systems was retrieval quality, not generation.

Anthropic’s Contextual Retrieval work reported sizable gains in retrieval quality: in their experiments, combining contextual embeddings with contextual BM25 cut failed retrievals by 49%, and adding reranking raised the reduction to 67%. That is not a small optimization; that is the difference between “hallucinates sometimes” and “trustworthy enough for customer-facing flows.”

2) Small knowledge bases no longer need full RAG pipelines

Another practical shift: if your total knowledge fits comfortably in context windows, you may not need RAG at all.

Anthropic explicitly notes that for knowledge bases under roughly 200,000 tokens, full-context prompting plus prompt caching can be faster and cheaper than building retrieval infra. This is a major architecture simplifier for internal copilots and docs assistants.
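A rough way to apply that threshold before building any retrieval infra is sketched below. The 4-characters-per-token ratio is a crude assumption for English text, so use your model's real tokenizer for an actual decision. `estimate_tokens` and `choose_strategy` are hypothetical helpers.

```python
FULL_CONTEXT_TOKEN_LIMIT = 200_000  # rough threshold cited above
CHARS_PER_TOKEN = 4                 # assumption: crude average for English text

def estimate_tokens(text: str) -> int:
    """Approximate token count from character length."""
    return len(text) // CHARS_PER_TOKEN

def choose_strategy(documents: list[str], limit: int = FULL_CONTEXT_TOKEN_LIMIT) -> str:
    """Pick full-context prompting for small knowledge bases, RAG otherwise."""
    total = sum(estimate_tokens(doc) for doc in documents)
    return "full_context_with_caching" if total < limit else "rag"

small_kb = ["x" * 4_000] * 10    # about 10,000 estimated tokens
large_kb = ["x" * 4_000] * 300   # about 300,000 estimated tokens
```

Treat the result as a starting point for evaluation, not a verdict: a knowledge base that fits in context today may outgrow it next quarter.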

3) Long context vs RAG is not settled

The “just use long context” crowd is too confident.

The 2025 LaRA benchmark (ICML/PMLR) found no silver bullet: the better choice depends on task type, model behavior, context length, and retrieval setup. Translation: if you’re making architecture decisions from one viral benchmark thread, you’re gambling with your roadmap.

4) Fine-tuning matured beyond naive SFT

Fine-tuning is no longer just “upload JSONL and hope.” Teams now use PEFT methods (LoRA/QLoRA families), stronger eval loops, and in some stacks even reinforcement-style fine-tuning for reasoning behavior.

This makes fine-tuning much more attractive for consistency, tone control, classification behavior, structured outputs, and policy adherence.
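Part of why PEFT is practical comes down to arithmetic. LoRA freezes a weight matrix W and trains only a low-rank update B·A, so the trainable parameter count drops sharply. The sketch below counts parameters for a single layer. The 4096 x 4096 shape and rank 8 are illustrative assumptions, not values from any specific model.

```python
def full_params(d_out: int, d_in: int) -> int:
    """Trainable parameters when the whole weight matrix is updated."""
    return d_out * d_in

def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters for a LoRA update B (d_out x r) times A (r x d_in)."""
    return rank * (d_out + d_in)

d_out, d_in, rank = 4096, 4096, 8   # illustrative layer shape and rank
full = full_params(d_out, d_in)     # 16,777,216
lora = lora_params(d_out, d_in, rank)  # 65,536
ratio = lora / full                 # 0.00390625, about 0.39% of the full layer
```

That ratio is per adapted layer. Total savings depend on which layers you adapt and the rank you choose.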

RAG vs Fine-Tuning: Side-by-Side

| Dimension | RAG | Fine-Tuning |
|---|---|---|
| Best for | Frequently changing facts, private docs, citations | Stable behavior, style, decision policies, structured outputs |
| Knowledge freshness | Excellent (update index, no retrain) | Poor for fast-changing data (requires retraining) |
| Explainability | High (source chunks/citations) | Lower (knowledge buried in weights) |
| Time to first value | Fast | Medium to slow (data prep, training, eval) |
| Runtime latency | Can be higher (retrieval + rerank + generation) | Can be lower for specific tasks |
| Operational complexity | Retrieval infra + indexing + eval | Training pipeline + data governance + eval |
| Failure mode | Bad retrieval -> bad answers | Overfit / drift / stale embedded knowledge |
| Cost profile | Ongoing inference + retrieval cost | Upfront training + lower per-request in some workloads |

WHEN EACH APPROACH WINS

The practical choice becomes much clearer when you separate knowledge problems from behavior problems.

RAG-FIRST

Use retrieval when facts change or provenance matters

RAG should usually be your first move for knowledge-heavy systems because it preserves freshness and explainability.

- Support bots over changing docs and policies

- Compliance workflows that need source grounding

- Private knowledge bases that update constantly

- Any product where citations or evidence matter

TUNE WHEN

Use fine-tuning when behavior is the bottleneck

Fine-tuning becomes valuable when the core problem is not missing facts, but inconsistent or expensive model behavior.

- Strict output formats and schema adherence

- Stable tone and style requirements

- Routing, classification, and policy behavior

- High-volume narrow tasks where latency matters

Opinionated Decision Framework

If you’re building today, use this sequence:

1. Start with prompting + evals. If you skip evals, every architecture debate is just vibes.

2. Add RAG before fine-tuning for knowledge-heavy tasks. Especially for docs QA, support agents, policy lookup, and regulated workflows.

3. Fine-tune when behavior is the bottleneck, not missing facts. Example: output format compliance, tone consistency, routing/classification, or domain-specific response style.

4. Go hybrid for serious products. Retrieval handles freshness and provenance. Fine-tuning enforces behavior and consistency.

💡 Key insight: Your model should “learn how to think in your product,” but it should still “look up what changed yesterday.”
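The sequence above can be written down as a small checklist function. This is a sketch of the reasoning, not a real planning tool; the `Workload` fields and `recommend` are hypothetical names invented for this example.

```python
from dataclasses import dataclass

@dataclass
class Workload:
    has_evals: bool
    facts_change_often: bool
    needs_citations: bool
    behavior_is_bottleneck: bool

def recommend(w: Workload) -> list[str]:
    """Return the adaptation layers to build, in the order to build them."""
    steps = ["prompting + evals"]
    if not w.has_evals:
        # Without evals there is no evidence for any later step.
        return steps
    if w.facts_change_often or w.needs_citations:
        steps.append("RAG")
    if w.behavior_is_bottleneck:
        steps.append("fine-tuning")
    return steps

support_bot = Workload(has_evals=True, facts_change_often=True,
                       needs_citations=True, behavior_is_bottleneck=True)
plan = recommend(support_bot)  # hybrid: all three layers
```

The early return is the point: a team with no evals gets the same answer no matter how the other flags are set.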

Common Mistakes Teams Keep Repeating

Mistake 1: Fine-tuning to inject dynamic facts

If your data changes weekly, fine-tuning it into weights is self-inflicted pain. Use retrieval.

Mistake 2: Shipping RAG without retrieval evals

Many teams evaluate final answer quality but never measure retrieval hit rate, chunk relevance, or reranker impact. That’s like debugging a compiler by staring at app screenshots.
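Two retrieval metrics that are cheap to compute are hit rate at k and mean reciprocal rank (MRR). The sketch below assumes you have a labeled set mapping each query to its relevant document IDs. The IDs and the tiny dataset are made up for illustration.

```python
def hit_rate_at_k(results: list[list[str]], relevant: list[set[str]], k: int) -> float:
    """Fraction of queries with at least one relevant doc in the top k."""
    hits = sum(1 for retrieved, gold in zip(results, relevant)
               if gold & set(retrieved[:k]))
    return hits / len(results)

def mean_reciprocal_rank(results: list[list[str]], relevant: list[set[str]]) -> float:
    """Average of 1/rank of the first relevant doc (0 if none is retrieved)."""
    total = 0.0
    for retrieved, gold in zip(results, relevant):
        for rank, doc_id in enumerate(retrieved, start=1):
            if doc_id in gold:
                total += 1.0 / rank
                break
    return total / len(results)

# Hypothetical retriever output (ranked doc IDs) for three queries.
results = [["d1", "d7", "d3"], ["d4", "d2", "d9"], ["d5", "d6", "d8"]]
relevant = [{"d1"}, {"d9"}, {"d0"}]

hit_at_1 = hit_rate_at_k(results, relevant, k=1)
hit_at_3 = hit_rate_at_k(results, relevant, k=3)
mrr = mean_reciprocal_rank(results, relevant)
```

Run the same metrics with and without your reranker to see whether it earns its latency.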

Mistake 3: Ignoring chunking and metadata strategy

RAG quality is often won or lost before inference starts: chunk boundaries, overlap, metadata, and indexing strategy matter more than model brand selection.
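As a minimal sketch of those levers, the function below splits text into fixed-size chunks with overlap and attaches metadata to each one. It splits on characters purely for simplicity. Real pipelines usually split on tokens, sentences, or document structure, and `chunk_text` and its field names are hypothetical.

```python
def chunk_text(text: str, source: str, chunk_size: int = 200, overlap: int = 50) -> list[dict]:
    """Split text into overlapping character windows, keeping source and offsets."""
    if overlap >= chunk_size:
        raise ValueError("overlap must be smaller than chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(text), step):
        piece = text[start:start + chunk_size]
        chunks.append({
            "text": piece,
            "source": source,          # lets answers cite where a chunk came from
            "start": start,
            "end": start + len(piece),
        })
        if start + chunk_size >= len(text):
            break  # the last window already reached the end of the text
    return chunks

chunks = chunk_text("a" * 500, source="handbook.md")
```

The `source` and offset fields are what make citations and filtered retrieval possible later, so decide on them before you index anything.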

Mistake 4: Treating long-context as architecture magic

Long context helps, but it does not remove ranking, salience, or noise problems. Bigger context windows are not a substitute for retrieval discipline.

Best Practices for 2026

- Adopt a “RAG-first, tune-second” default for knowledge applications.

- Implement hybrid retrieval (semantic + lexical/BM25) plus reranking where quality matters.

- Track two eval layers: retrieval metrics and answer metrics.

- Fine-tune for constrained behaviors (format, style, classification, tool use policy), not for constantly changing facts.

- Use PEFT methods first unless you have a clear reason for full-model tuning.

- Design for reversibility: you should be able to swap embedding model, reranker, or tuned head without rewriting your whole stack.
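One common way to combine semantic and lexical results is reciprocal rank fusion (RRF), which merges ranked lists without needing comparable scores. The sketch below is one option among several, not a prescribed method. The constant `k=60` is the value commonly used with RRF, and the doc IDs are illustrative.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists: each doc scores the sum of 1 / (k + rank) over the lists."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)

semantic = ["d2", "d1", "d3"]   # hypothetical embedding-search ranking
lexical = ["d1", "d4", "d2"]    # hypothetical BM25 ranking
fused = reciprocal_rank_fusion([semantic, lexical])
```

Documents that rank well in both lists rise to the top. A reranker can then reorder the fused shortlist where quality matters.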

Real-World Architecture Pattern

A practical production pattern looks like this:

- User query enters intent router.

- Router chooses lookup-heavy path (RAG) or behavior-heavy path (tuned model).

- RAG path: retrieve -> rerank -> grounded generation with citations.

- Tuned path: low-latency specialized generation.

- Shared safety, policy, and eval layer logs both retrieval and output quality.

This architecture avoids the false binary and gives you room to evolve.
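The routing step can be sketched in a few lines. The keyword router here is a placeholder assumption; in practice the router is often a small classifier, possibly a fine-tuned one. `LOOKUP_HINTS`, `route`, and `handle` are hypothetical names, and the two path functions stand in for real pipelines.

```python
from typing import Callable

# Placeholder heuristic: words that suggest the query needs a lookup.
LOOKUP_HINTS = ("policy", "docs", "price", "latest", "where", "when")

def route(query: str) -> str:
    """Send lookup-heavy queries to RAG, everything else to the tuned model."""
    q = query.lower()
    return "rag" if any(hint in q for hint in LOOKUP_HINTS) else "tuned"

def handle(query: str,
           rag_path: Callable[[str], str],
           tuned_path: Callable[[str], str],
           log: list[dict]) -> str:
    """Dispatch the query and record which path answered, for the shared eval layer."""
    path = route(query)
    answer = rag_path(query) if path == "rag" else tuned_path(query)
    log.append({"query": query, "path": path, "answer": answer})
    return answer

log: list[dict] = []
handle("What is the latest refund policy?",
       rag_path=lambda q: "grounded answer with citations",
       tuned_path=lambda q: "specialized answer",
       log=log)
```

Because both paths write to the same log, you can compare their quality and move query types between them without touching either pipeline.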

FAQ

Is RAG better than fine-tuning in 2026?

For knowledge freshness and citations, yes. For stable behavior control, no. The winner depends on the job, and most serious systems use both.

Does long context replace RAG?

Not universally. Recent benchmarks show performance depends on task and setup. Long context is powerful, but not an automatic replacement for retrieval pipelines.

When should I fine-tune instead of using RAG?

Fine-tune when your failure mode is behavior inconsistency: wrong format, unstable tone, weak classification, or poor policy adherence. If failures come from missing/stale facts, use RAG.

Is fine-tuning cheaper than RAG?

It can be, for high-volume narrow tasks after training cost is amortized. But for rapidly changing knowledge domains, RAG usually wins on maintenance and freshness.
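"Amortized" has a simple break-even form: the upfront training cost divided by the per-request saving. The sketch below uses made-up dollar figures purely to show the arithmetic, and it ignores retraining, hosting, and evaluation costs, which can dominate in practice.

```python
def break_even_requests(training_cost: float,
                        cost_per_request_rag: float,
                        cost_per_request_tuned: float) -> float:
    """Requests needed before the tuned model's per-request saving repays training."""
    saving = cost_per_request_rag - cost_per_request_tuned
    if saving <= 0:
        raise ValueError("tuned model must be cheaper per request to break even")
    return training_cost / saving

# Hypothetical figures: $500 training run, $0.004 vs $0.002 per request.
n = break_even_requests(500.0, 0.004, 0.002)  # roughly 250,000 requests
```

If your knowledge changes fast enough to force frequent retraining, the training cost recurs and the break-even point keeps moving away.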

Can I combine RAG and fine-tuning?

You should. In 2026, hybrid systems are the practical default for production-grade quality.

Conclusion

The RAG vs fine-tuning debate is mostly noise now.

The real question is where to place knowledge, where to encode behavior, and how to evaluate both continuously. If you remember one line, remember this: RAG keeps your system truthful today; fine-tuning makes it consistent tomorrow.

Build with both, but use each for its actual job.

If you found this useful, read about evaluation design for LLM systems next, because architecture without evals is guesswork at scale.

[INTERNAL LINK: LLM evaluation framework → Evals for production AI systems]

[INTERNAL LINK: vector databases and chunking strategy → RAG implementation guide]

[INTERNAL LINK: prompt engineering to production workflows → Prompt engineering best practices]

[EXTERNAL LINK: OpenAI fine-tuning improvements (Apr 2024) → https://openai.com/index/introducing-improvements-to-the-fine-tuning-api-and-expanding-our-custom-models-program]

[EXTERNAL LINK: Anthropic Contextual Retrieval (Sep 2024) → https://www.anthropic.com/engineering/contextual-retrieval]

[EXTERNAL LINK: LaRA benchmark (ICML 2025) → https://proceedings.mlr.press/v267/li25dv.html]

Written for umesh-malik.com - no-fluff technical writing on AI, Web Dev, and Engineering.

Written by Umesh Malik

AI Engineer & Software Developer. Building GenAI applications, LLM-powered products, and scalable systems.