Claude Code Leak 2026: What Escaped, What Stayed Locked, and the Copyright Irony No One Is Talking About

A clear, action-focused breakdown of the March 31, 2026 Claude Code source-map leak: what was exposed, what was not, Anthropic's DMCA sweep that hit its own repos, clean-room reimplementations, and the uncomfortable copyright parallels.

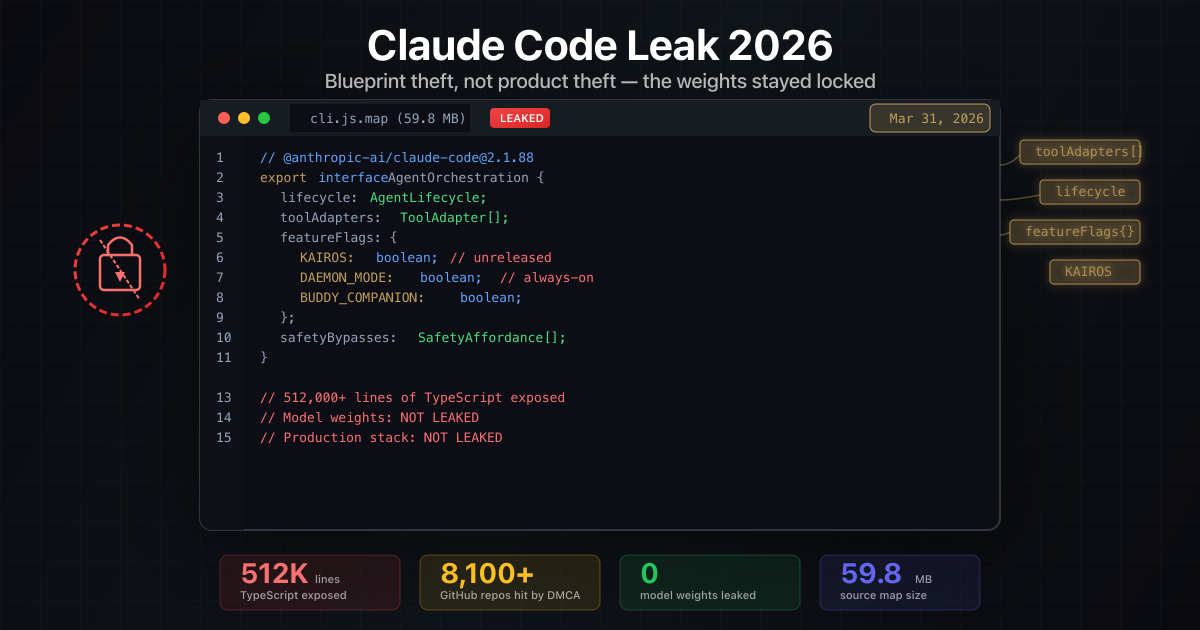

The Claude Code source-map leak on March 31, 2026 was not a Hollywood breach. It was a mundane packaging mistake that briefly put ~512k lines of TypeScript orchestration logic on the public internet. Within hours, the repo topped GitHub’s trending charts and Anthropic fired off DMCA notices - accidentally hitting forks of their own official repository in the process.

The model weights and customer data never left the vault, but the architectural blueprint did. And the internet’s reaction? Let’s just say developers had opinions about what they found inside.

Leak at a glance

- 512k lines of TypeScript exposed (via npm source map 2.1.88)

- 8,100+ GitHub repos hit by DMCA (scope later narrowed after backlash)

- 0 model weights leaked (capabilities remain hosted)

- ~$2.5B Claude Code ARR (built on a "vibe coded" foundation)

WHY THIS LEAK MATTERS

The incident reveals more about the AI industry than the code itself.

THE CODE

Developers called it "vibe coded garbage"

Many observers noted the codebase appeared rapidly developed without traditional review practices - yet it powers a $2.5B ARR product.

- Fast iteration over polish

- Product-market fit trumps code quality

- The real moat is the model, not the harness

THE DMCA

Anthropic accidentally DMCA'd their own repos

In the rush to contain the leak, Anthropic issued takedowns against forks of their official claude-code repository containing their own public examples.

- 8,100+ repos initially targeted

- Scope narrowed after developer backlash

- TechCrunch: "an accident"

THE IRONY

Clean-room reimplementations emerged immediately

Developers rewrote Claude Code in Python and Rust, arguing the same fair-use logic AI companies use for training data.

- Anthropic getting "a taste of their own medicine"

- Copyright frameworks unresolved

- Transformation vs. derivation debate

THE REALITY

The code was never the competitive advantage

Other AI coding tools (Codex, Gemini CLI) are already open source. Claude Code's value is the seamless model integration.

- Orchestration is commodity

- Model quality is the moat

- User experience is the product

The fast version (TL;DR)

- An npm publish of @anthropic-ai/claude-code@2.1.88 accidentally shipped a massive cli.js.map, exposing the full CLI/agent orchestration codebase.

- The leak gives competitors architectural insight and reveals unreleased toggles (like always-on daemon mode, KAIROS flags, and a “buddy” Tamagotchi-like companion experiment). It does not give anyone Claude’s model weights, safety data, or hosted inference stack.

- Developers roasted the code quality online - calling it “vibe coded garbage” - but the $2.5B ARR product proves that product-market fit beats code polish.

- Anthropic yanked the bad package, issued DMCA notices that briefly overreached (hitting their own repos), and is rotating internal keys plus tightening pre-publish checks.

- Clean-room reimplementations in Python and Rust appeared within 48 hours, sparking debates about AI copyright that mirror the industry’s own training data controversies.

- For teams: clear caches of 2.1.88, upgrade, document removal for audit, and avoid touching leaked repos to stay clear of copyright and CFAA trouble.

What actually leaked vs. what did not

Leaked

- TypeScript orchestration for Claude Code’s CLI, tool adapters, agent lifecycle, and feature flags.

- Internal naming and roadmap hints (e.g., KAIROS, daemon, “buddy” Tamagotchi-like companion experiments).

- Safety-bypass affordances visible in code paths that handle prompt and tool execution order.

Not leaked

- Claude model weights, safety datasets, or training recipes.

- Production API keys or customer artifacts.

- Hosted inference stack and scaling primitives that make Claude Code performant in production.
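As background on why a single .map file can expose so much: the Source Map v3 format allows an optional `sourcesContent` array that carries the original source text verbatim, alongside the `sources` list of file paths. The reporting cited here does not describe the internal layout of the leaked file, so the following is only a minimal sketch for auditing your own build output. The function name `embedded_sources` and the two-file example map are hypothetical.

```python
import json


def embedded_sources(map_text: str) -> dict[str, int]:
    """Map each source path to the length of its embedded original text.

    Follows the Source Map v3 layout: "sources" is a list of paths and the
    optional "sourcesContent" is a parallel list of file contents (or null).
    """
    source_map = json.loads(map_text)
    paths = source_map.get("sources", [])
    contents = source_map.get("sourcesContent") or []
    sizes = {}
    for path, text in zip(paths, contents):
        if text is not None:  # null means the source is not embedded
            sizes[path] = len(text)
    return sizes


# Hypothetical map for illustration: only the first file has its source embedded.
example = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["src/main.ts", "src/util.ts"],
    "sourcesContent": ["export const x = 1;\n", None],
    "names": [],
    "mappings": "",
})

print(embedded_sources(example))
```

A non-empty result for a file you are about to publish means the original source ships with it.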

What developers found (and what they said about it)

The code quality debate became almost as viral as the leak itself. Within hours of mirrors appearing, developers were dissecting the codebase and sharing their takes:

“Vibe coded garbage that’s making $2.5B ARR. The state of software in 2026.”

“This is what happens when you ship fast and iterate. It works. The code does not have to be beautiful.”

“I’ve seen worse in production at Fortune 500s. At least this actually works.”

The reactions split into two camps:

Camp 1: “This proves code quality does not matter”

- The codebase appeared rapidly developed, with shortcuts and patterns that would not pass a traditional code review

- Yet Claude Code captured ~$2.5B in annualized recurring revenue in under a year

- The lesson: product-market fit and user experience trump architectural purity

Camp 2: “This is exactly why AI-generated code is concerning”

- Critics argued the codebase reflected the output of AI-assisted development pushed too fast

- The leaked source showed patterns consistent with LLM-generated code that was accepted without thorough review

- The counter-argument: does it matter if it works and ships?

The DMCA chaos: when Anthropic accidentally took down their own repos

Anthropic’s response was swift - perhaps too swift. According to TechCrunch, the company “took down thousands of GitHub repos trying to yank its leaked source code,” a sweep Anthropic later characterized as “an accident.”

What went wrong:

- Anthropic issued broad DMCA takedown requests targeting any repository containing Claude Code patterns

- The net caught forks of their own official github.com/anthropics/claude-code repository

- Legitimate open-source contributions, examples, and tutorials were temporarily nuked

- Developer backlash forced Anthropic to narrow the scope

The scale:

- Initial sweep: ~8,100 repositories flagged

- After correction: Focus narrowed to repos containing actual leaked source map content

- Collateral damage: Unknown number of legitimate projects temporarily affected

The irony: Anthropic, a company that has been sued for training on copyrighted content, aggressively pursued copyright enforcement against developers who may have been doing nothing more than forking their public repository.

Timeline you can brief leadership with

- Mar 31, 2026 - 04:00 ET: Bad build goes live. npm package 2.1.88 publishes with a giant source map exposing the full TypeScript code.

- Mar 31, 2026 - Morning: Mirrors explode on GitHub. Repo hits trending; forks and zips circulate before removal.

- Apr 1, 2026: DMCA sweep overshoots. Anthropic requests takedown of ~8,100 repos; scope later narrowed after developer backlash.

- Apr 1-2, 2026: Fixed build + key rotation. Patched package replaces 2.1.88; internal secrets rotated; publishing checks hardened.

The copyright irony nobody wants to talk about

Here is where the story gets uncomfortable. Within 48 hours of the leak, “clean-room implementations” of Claude Code started appearing - developers rewrote the functionality from scratch in Python and Rust, using the leaked code as a reference for architecture but not copying it directly.

Their argument? The same one AI companies use to justify training on copyrighted content:

“Using AI to rewrite content does not constitute derivative work. This is how learning works.”

THE COPYRIGHT PARALLEL

The leak surfaced an uncomfortable mirror between AI training practices and code 'theft.'

ANTHROPIC'S TRAINING

What AI companies argue about training data

- Training on publicly available content is transformative fair use

- We learn patterns and general knowledge rather than memorizing

- This is how human learning works

- The output is new, not copied

CLEAN-ROOM CLAUDE

What developers argue about reimplementations

- Using leaked code as reference to write new code is transformative

- We learned the architecture; we did not copy the implementation

- This is how reverse engineering works

- The new code is original work

The debate:

- Anthropic has been sued for training on copyrighted books, articles, and code without permission

- Anthropic argues this is “transformative fair use” and “how learning works”

- Developers now use the same argument to justify clean-room reimplementations of Claude Code

- Critics call it “Anthropic getting a taste of their own medicine”

The legal reality:

- Violating API ToS through fraudulent accounts is clearer legal ground than training data questions

- But the clean-room reimplementers are not using fraudulent accounts - they are rewriting from public observation

- The frameworks for both situations remain unsettled and actively litigated

How the leak changes the game (even without weights)

- Faster Claude-like clones - Open-model teams can mirror the orchestration pattern with their own models, compressing their time-to-market for developer agents.

- Better red-team playbooks - Seeing how Claude Code sequences tools and guards prompts gives attackers a richer map for prompt-injection and tool-escape tests.

- Enterprise procurement friction - Security and legal teams will now ask for stronger SBOMs, pre-publish gates, and attestation from any agent toolchain vendor, not just Anthropic.

- Legal chill for builders - Using the leaked code directly risks DMCA/CFAA exposure; clean-room reimplementation or open alternatives (e.g., bespoke SvelteKit/Vite agents) are safer paths.

- Architectural commoditization - The leak confirms that agent harnesses are largely interchangeable; the model is the moat.

What to do if you run Claude Code (or ship agents like it)

- Purge and upgrade: Delete caches and lockfiles pointing to @anthropic-ai/claude-code@2.1.88; install the latest fixed release.

- Rotate anyway: Even though no secrets leaked, rotate CLI tokens and workstation credentials as a hygiene move.

- Gate your own publishes: Add CI checks that block source maps or unusually large artifacts from going to npm/registries.

- Document removal: Keep an audit trail (ticket + commit) noting removal of the leaked artifact to prove non-use in case of legal scrutiny.

- Monitor copycats: Set GitHub/npm alerts for packages mimicking Claude Code behaviors; add detection rules for suspicious agent execution patterns.
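Two of the steps above, finding pins of the affected version and gating your own publishes, can be roughly sketched in a few lines. This is a minimal illustration, not a vetted tool. It assumes an npm lockfile in the v2/v3 layout (a top-level "packages" object keyed by install path) and assumes the JSON printed by `npm pack --dry-run --json` is a list of objects with "files" and "unpackedSize" fields. The function names, the size limit, and the sample inputs are hypothetical.

```python
import json

# Package name and version as given in the article; the rest is illustrative.
AFFECTED_NAME = "@anthropic-ai/claude-code"
AFFECTED_VERSION = "2.1.88"


def pinned_affected_paths(lockfile_text: str) -> list[str]:
    """List install paths in a package-lock.json that pin the affected version.

    Assumes lockfile v2/v3, where "packages" is keyed by install path
    (for example "node_modules/<name>").
    """
    lock = json.loads(lockfile_text)
    suffix = "node_modules/" + AFFECTED_NAME
    return [
        path
        for path, meta in lock.get("packages", {}).items()
        if path.endswith(suffix) and meta.get("version") == AFFECTED_VERSION
    ]


def publish_violations(pack_report: list[dict], max_unpacked_bytes: int) -> list[str]:
    """Flag source maps and oversized tarballs before publishing.

    `pack_report` is the parsed output of `npm pack --dry-run --json`
    (shape assumed as described in the text above).
    """
    problems = []
    for package in pack_report:
        for entry in package.get("files", []):
            path = entry.get("path", "")
            if path.endswith(".map"):
                problems.append(f"source map would be published: {path}")
        size = package.get("unpackedSize", 0)
        if size > max_unpacked_bytes:
            problems.append(f"unpacked size {size} exceeds limit {max_unpacked_bytes}")
    return problems


# Hypothetical inputs for illustration.
lockfile = json.dumps({
    "lockfileVersion": 3,
    "packages": {
        "": {"name": "demo"},
        "node_modules/@anthropic-ai/claude-code": {"version": "2.1.88"},
    },
})
report = [{
    "files": [{"path": "cli.js"}, {"path": "cli.js.map"}],
    "unpackedSize": 60_000_000,
}]

print(pinned_affected_paths(lockfile))
print(publish_violations(report, max_unpacked_bytes=10_000_000))
```

In a CI job, a non-empty result from either function would be a reasonable signal to fail the build. The size threshold is a judgment call per package.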

Reader-friendly checklist: is this “free Claude Code”?

- Can you run Claude locally now? No. You still need Claude model weights and Anthropic’s hosted inference; neither leaked.

- Can you strip safeguards? You can study how safeguards are wired, which helps red-teamers, but production Claude safety lives in weights + policies you do not have.

- Is there sensitive customer data? Anthropic says no customer or key material was inside the source map.

- Is Anthropic’s reputation hurt? Yes - supply-chain trust took a hit - but capability control remains intact.

The bigger picture

This leak is a window into three truths the AI industry does not like to discuss:

Code quality is overrated - A “vibe coded” codebase is powering one of the fastest-growing AI products in history. Product-market fit and user experience beat architectural elegance every time.

The real moat is the model - Claude Code’s source is now public knowledge, but competitors cannot replicate the experience without Anthropic’s models. The harness is commodity; the AI is the product.

Copyright norms cut both ways - AI companies have spent years arguing that learning from copyrighted content is fair use. They cannot be surprised when others apply that logic to their outputs.

Closing

The leak hands the world a blueprint, not a working product. If you are a builder, treat it as a reminder to harden your own release pipelines. If you are an enterprise buyer, update your SBOM and publishing checks. And if you are tempted to grab the code from a mirror - do not. The parts you want most never left Anthropic’s servers.

The official github.com/anthropics/claude-code repository remains active with 104k stars and 16.4k forks. That is where the legitimate skills, tutorials, and examples live. Everything else is legal risk without the actual value.

Sources: Axios reporting on the March 31 leak, TechCrunch on the DMCA overreach, build.ms analysis of code quality observations, GitHub trending data, and community discussions on Hacker News and Twitter/X.

Written by Umesh Malik

AI Engineer & Software Developer. Building GenAI applications, LLM-powered products, and scalable systems.

Related Articles

AI & Security

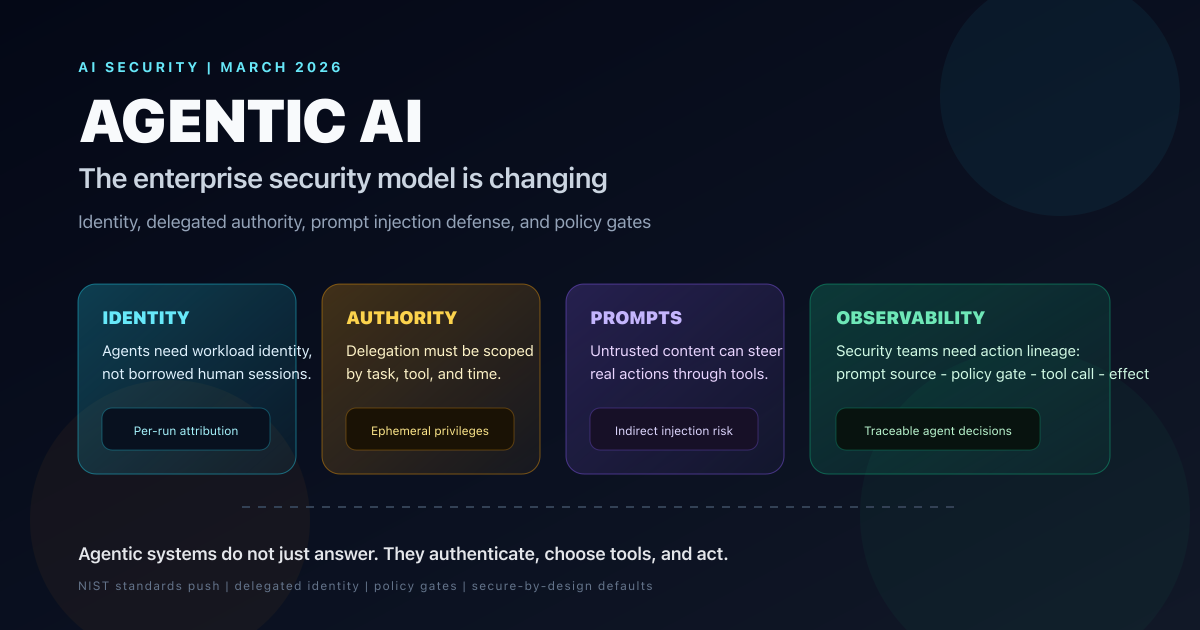

Agentic AI Is Changing the Security Model for Enterprise Systems: What CISOs Need to Fix Now

Forbes surfaced the shift, but the deeper story is that agentic AI breaks static enterprise trust models. Here is how identity, delegated authority, prompt injection defense, and tool-level policy need to change in 2026.

AI & Security

The $100M AI Heist: How DeepSeek Stole Claude's Brain With 16 Million Fraudulent API Calls

Anthropic exposes industrial-scale IP theft by DeepSeek, Moonshot, and MiniMax—16 million exchanges, 24,000 fake accounts, and a national security threat that changes everything about AI security. This is the full forensic breakdown of the largest AI model theft operation ever documented.

AI & Developer Experience

Anthropic Code Review for Claude Code: Multi-Agent PR Reviews, Pricing, Setup, and Limits

Anthropic launched Code Review for Claude Code on March 9, 2026. This guide explains how the multi-agent PR reviewer works, what it costs, who gets access, how REVIEW.md and CLAUDE.md customization works, and where it beats static analyzers.