Agentic AI Is Changing the Security Model for Enterprise Systems: What CISOs Need to Fix Now

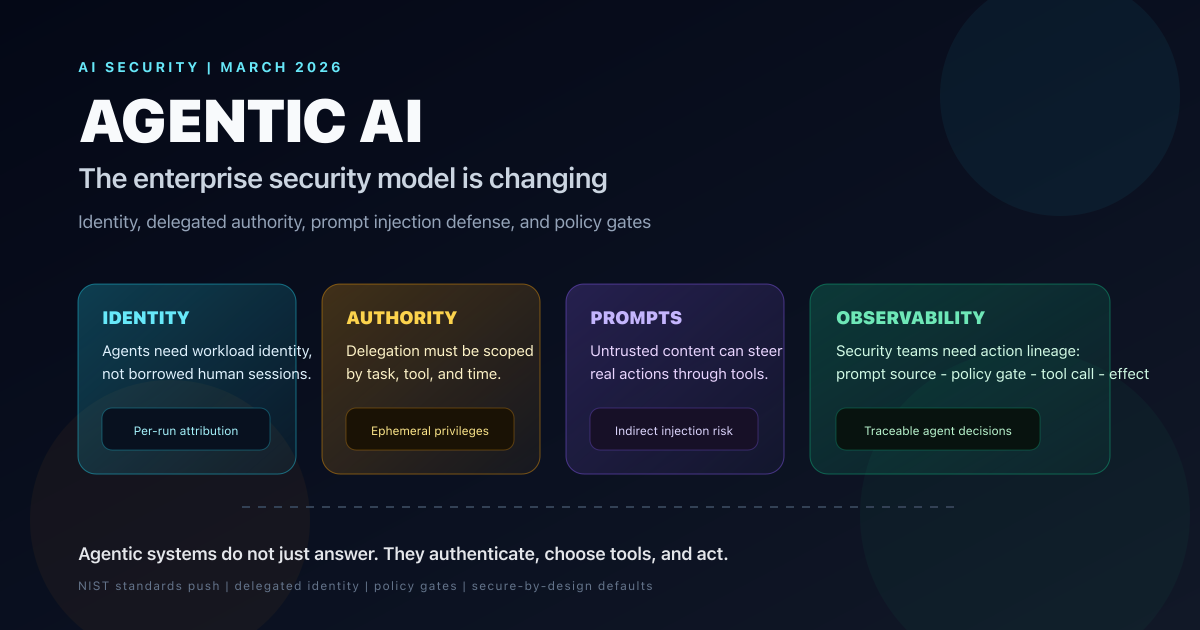

Forbes surfaced the shift, but the deeper story is that agentic AI breaks static enterprise trust models. Here is how identity, delegated authority, prompt injection defense, and tool-level policy need to change in 2026.

On March 7, 2026, Heather Wishart-Smith wrote in Forbes that agentic AI is changing the security model for enterprise systems. That framing is correct, but it still sounds smaller than the actual shift.

Traditional enterprise security assumed a simple chain of control: a human authenticates, software executes deterministic logic, and security teams wrap the environment with IAM, network controls, logging, and endpoint policy. Agentic AI breaks that chain. Now the system that reads instructions is also the system that selects tools, interprets ambiguous data, and decides which action to take next.

That turns security from a question of “who logged in?” into a harder question: what authority was delegated, to which agent, for which task, under what constraints, and how do you prove what happened afterward?

The timing matters. NIST opened its RFI on securing AI agent systems on January 12, 2026, published an NCCoE concept paper on software and AI agent identity and authorization on February 5, and launched the AI Agent Standards Initiative on February 17. This is no longer a niche AppSec debate. It is becoming a standards, identity, and governance problem for every enterprise that wants agents touching production systems, customer data, code, or money.

The short answer: agentic AI forces enterprises to redesign security around delegated identity, constrained authority, tool-level policy enforcement, and continuous observability. If your current plan is “put SSO in front of the app and log the API calls,” you are under-scoping the problem.

AGENTIC AI SECURITY SHIFT

Jan 12

NIST RFI

agent security opens

Feb 17

Standards initiative

CAISI launch

Apr 2

Identity paper comments

NCCoE deadline

4

Control layers

identity, policy, isolation, audit

READ THE SECTION THAT MATCHES YOUR JOB

The same story lands differently depending on whether you own policy, identity, or product delivery.

Use this to skip to what matters

CISOS

You need the strategic security model shift

Start with the control-model comparison and the identity section. That is where the operating assumption changes.

Focus on

OutcomeYou will leave with a cleaner mental model for board, budget, and architecture conversations.

IAM + PLATFORM

You need the technical control plane

Focus on workload identity, scoped credentials, policy gates, and audit lineage.

Focus on

OutcomeYou will know what needs to change before agents get broad write access.

AI PRODUCT TEAMS

You need practical shipping constraints

Jump to prompt injection, excessive agency, and the rollout checklist before expanding autonomy.

Focus on

OutcomeYou will know which product shortcuts create unacceptable enterprise risk.

TL;DR

- Forbes is right: agentic AI changes enterprise security because agents act, not just answer.

- NIST is already treating AI agent security as a distinct category, with an RFI that closed on March 9, 2026 and a separate identity-and-authorization comment window that stays open through April 2, 2026.

- The biggest shift is from user authentication to delegated authority management. Agents need their own identities, not borrowed human sessions and shared service keys.

- Prompt injection is now an action-security problem, not just a model-safety problem. In tool-using systems, hostile content can influence real operations.

- OWASP’s framing of prompt injection and excessive agency maps directly to enterprise risk: unauthorized tool use, data exfiltration, workflow manipulation, and harmful automated actions.

- The minimum viable control stack is agent identity, short-lived scoped credentials, policy gates on every tool call, sandboxing, approval workflows, and full action lineage.

- Enterprises should not stop pilot programs, but they should stop giving agents broad standing privileges.

The Real Break: Agents Are Actors, Not Just Interfaces

The Forbes piece matters because it pulls a technical issue into the mainstream enterprise conversation: the security challenge is not simply “AI can make mistakes.” It is that AI agents now sit in the middle of identity, applications, documents, APIs, workflows, and action loops.

That matches how NIST defines the problem. In its January 12 RFI, NIST describes AI agent systems as systems capable of planning and taking autonomous actions that impact real-world systems or environments. That definition matters because it moves the discussion from model quality into systems security.

Once an LLM can:

- read a customer email

- decide which SaaS application to open

- retrieve data from internal systems

- choose a tool

- trigger the next action

the security boundary is no longer the chatbot interface. The boundary is the full decision-and-action path.

That is why security leaders quoted by Forbes keep landing on the same conclusion from different directions. Some focus on identity and delegated credentials. Others focus on visibility across layers. Others focus on secure-by-design defaults. They are all describing the same structural change: agents compress decision-making and execution into one runtime surface.

What Breaks First In Enterprise Deployments

The first failures are usually not spectacular. They are architectural shortcuts that feel harmless in a pilot and become dangerous once the agent gets real permissions.

THE FIRST SIX FAILURE MODES

These are the control gaps that appear fastest when enterprises move from chatbot demos to tool-using agents.

IDENTITY

Agents borrow human sessions

Teams often let agents reuse a user token, browser session, or shared service credential instead of issuing agent-specific identity.

- Weak attribution

- No clean revocation path

- Privilege bleed across tasks

AUTHORITY

Delegated credentials are too broad

Long-lived API keys and standing privileges make every hallucination or injection incident more damaging.

- No task scoping

- No time-bounded access

- Blast radius expands silently

PROMPTS

External content becomes an attack surface

Emails, tickets, files, websites, and tool output can all carry instructions that steer model behavior.

- Indirect prompt injection

- Memory poisoning

- Tool poisoning

TOOLS

One prompt fans out into many systems

An apparently simple request can chain into CRM, ERP, Slack, ticketing, GitHub, or cloud actions in the background.

- Cross-system action chains

- Hard-to-see side effects

- Compounded privilege risk

OBSERVABILITY

Teams log outputs, not action lineage

Traditional logs capture API calls and status codes, but not which context source influenced the decision or which policy blocked it.

- Thin audit trail

- Harder forensics

- Weak accountability

GOVERNANCE

Approval boundaries are underspecified

Without explicit human gates, agents drift from assistive behavior into autonomous authority by accident.

- Shadow autonomy

- No non-repudiation

- Unsafe operational shortcuts

Old Enterprise Security vs Agentic Enterprise Security

The control model changes more than most vendor pitches admit.

| Dimension | Traditional enterprise model | Agentic enterprise model |

|---|---|---|

| Primary actor | Human user plus deterministic software | Human, software agent, model, and tools acting together |

| Trust anchor | User authentication and device posture | Agent identity, delegated authority, task context, and tool scope |

| Main attack paths | Credential theft, phishing, endpoint compromise | Prompt injection, excessive agency, tool poisoning, token misuse |

| Blast radius | Usually bounded by role and app session | Multiplies across connected tools and chained actions |

| Monitoring | App logs, network telemetry, IAM events | Needs prompt source, tool trace, policy decisions, and action lineage |

| Governance rhythm | Periodic access review and app hardening | Continuous policy evaluation over every delegated action |

This is why “zero trust for agents” is not enough as a slogan. Zero trust helps with connection and access assumptions. But agents introduce a separate authority problem: the system deciding what to do is also the system executing the action path.

Identity Becomes the New Control Plane

This is where the NIST and NCCoE work is most useful.

The February 5 NCCoE concept paper is not really about chatbots. It is about applying identity standards and best practices to software and AI agents, with explicit attention to identification, authorization, auditing, non-repudiation, and controls that mitigate prompt injection. That is the right frame.

If an agent can deploy code, move data, open tickets, approve discounts, change configs, or trigger payments, then the enterprise needs answers to four questions on every run:

- Which human or business process delegated this task?

- Which exact identity is the agent using right now?

- Which tools and data sources are in scope for this task only?

- What evidence exists for every decision and action taken?

STATIC TRUST VS ACTIVE DELEGATION

The old model assumed software executed code paths humans already defined. The new model must assume agents continuously interpret, choose, and act.

OLD MODEL

Authenticate once, trust the application

A user signs in, the app inherits the session, and RBAC plus logs do most of the work.

- Standing privileges are common

- Service accounts often outlive the task that needed them

- Attribution usually stops at the app layer

This breaks once a model starts selecting tools and shaping multi-step actions.

NEW MODEL

Delegate authority per task, per tool, per time window

The agent needs its own verifiable identity and policy-constrained access derived from a human or workflow owner.

- Short-lived credentials tied to the task

- Least-privilege scope per tool call

- Strong lineage from human intent to agent action to system effect

This is closer to workload identity plus policy orchestration than to classic app session management.

The practical implication is blunt: borrowed browser cookies, copied API keys, and shared service accounts are the wrong abstraction for agentic systems. Enterprises need agent-specific workload identity, ephemeral credentials, and policy checks that evaluate intent, data sensitivity, action type, and destination before execution.

The Minimum Viable Control Stack

You do not need a perfect reference architecture before starting. You do need a minimum viable control stack before expanding autonomy.

WHAT THE CONTROL STACK SHOULD INCLUDE

Think of this as the minimum baseline before agents get meaningful write privileges in enterprise environments.

WORKLOAD IDENTITY

A real identity for every agent run

Each agent session should be attributable to a unique runtime identity linked back to a user, workflow, or service owner.

- No shared accounts

- Clear revocation

- Better attribution

EPHEMERAL AUTHORITY

Short-lived, scoped delegation

Credentials should expire quickly and grant only the exact permissions required for the current task.

- Task-bounded

- Time-bounded

- Resource-bounded

POLICY ENFORCEMENT

A gate on every tool call

The policy layer should evaluate action risk before the agent touches code, data, money, or production controls.

- Action-aware

- Data-aware

- Environment-aware

UNTRUSTED INPUT HANDLING

Treat documents like hostile influence surfaces

Web pages, PDFs, emails, tickets, and tool output should be labeled and filtered as untrusted model input.

- Prompt injection scanning

- Content labeling

- Source-aware reasoning

ISOLATION

Sandbox memory, tools, and third-party servers

The wider the tool ecosystem, the more you need execution boundaries and explicit trust tiers.

- Sandbox MCP connections

- Separate high-risk tools

- Constrain lateral movement

AUDIT + RESPONSE

Record action lineage and keep a kill switch

You need enough traceability to investigate decisions and enough control to stop a misbehaving agent fast.

- Full trace logs

- Policy-deny records

- Rapid disable path

Why Prompt Injection Is Now an Enterprise Security Event

OWASP’s LLM01 prompt injection guidance and LLM06 excessive agency guidance are useful here because they translate abstract AI risk into operational failure modes.

Prompt injection matters more in agentic systems because the model is no longer just generating text. It is selecting tools, invoking extensions, and influencing downstream actions. A malicious instruction hidden in a help ticket, a shared document, a website, a tool description, or a retrieved memory item can steer the model away from its intended workflow.

Excessive agency is the multiplier. If the agent has too much standing power, then even a small steering failure can become:

- an unauthorized data retrieval

- a ticket closure that hides a real incident

- a repo change that should have required approval

- a financial or operational action triggered under false context

This is also where CISA’s secure-by-design posture becomes more relevant, not less. The right enterprise question is not “Can customers configure enough controls after deployment?” It is “Did the vendor design the product so risky autonomy is constrained by default?” In agentic systems, safe defaults, included logging, and strong identity primitives are product requirements, not premium extras.

The 2026 Timeline Explains Why This Topic Suddenly Matters

Security teams are not imagining a future problem. The standards and policy machinery is already moving.

-

January 12, 2026

NIST opens RFI on securing AI agent systems

The agency asks for concrete input on unique threats, mitigations, measurements, and deployment controls for agents.

-

February 5, 2026

NCCoE publishes identity and authorization concept paper

The discussion shifts from generic AI governance to identification, authorization, auditing, non-repudiation, and prompt-injection controls for agents.

-

February 17, 2026

NIST launches the AI Agent Standards Initiative

CAISI formalizes a standards track around secure, interoperable, and trusted agent systems.

-

March 7, 2026

Forbes elevates the issue for enterprise leaders

The conversation reaches a broader executive audience: agentic AI is changing the enterprise security model.

-

March 9, 2026

RFI comment deadline arrives

The initial federal input window on AI agent security closes, showing how quickly the field is operationalizing.

-

April 2, 2026

Identity paper comments close

The enterprise identity and authorization discussion for agents stays open longer, which is telling in itself.

What CISOs and Platform Teams Should Do In the Next 30 Days

The right move is not to freeze every pilot. It is to stop pretending that agent access is just another SaaS integration.

30-DAY AGENTIC AI SECURITY CHECKLIST

Track progress as you work through the list

0%

0/9 done

The Strategic Read For Enterprise Leaders

The biggest mistake executives can make is treating agent security as a faster version of chatbot governance. It is not.

Chatbot governance mostly asked whether answers were safe, accurate, and compliant. Agent security asks whether a system with probabilistic reasoning and delegated power can be trusted to operate inside real workflows without causing unacceptable damage.

That is a different class of question. It requires different controls. And it lands in a different budget line: not just model safety or AI governance, but IAM, AppSec, platform engineering, procurement, and incident response.

FAQ

Questions readers usually have

These are the practical questions teams ask once they understand that agentic AI is an authority problem, not just a model problem.

Final Take

The Forbes article should be read as a warning shot, not a trend piece.

Agentic AI is not simply adding another application to the enterprise stack. It is introducing a new actor that can interpret instructions, chain tools, and exercise delegated power in environments built for humans and deterministic software.

That is why the security model changes. Identity must become more granular. Authority must become shorter-lived and more explicit. Policy must sit in front of tool use. Observability must capture action lineage, not just final outputs. And product teams have to stop treating safe autonomy as an optional layer they will add later.

The enterprise winners in 2026 will not be the companies that give agents the most power the fastest. They will be the companies that build the cleanest authority model around them.

Sources

- Forbes: Agentic AI Is Changing The Security Model For Enterprise Systems (Mar 7, 2026)

- NIST: CAISI Issues Request for Information About Securing AI Agent Systems (Jan 12, 2026)

- NIST: AI Agent Standards Initiative (created Feb 17, 2026)

- NCCoE: New Concept Paper on Identity and Authority of Software Agents (Feb 5, 2026)

- OWASP GenAI: LLM01 Prompt Injection

- OWASP GenAI: LLM06 Excessive Agency

- CISA: Secure by Design

Related Reading

Written by Umesh Malik

AI Engineer & Software Developer. Building GenAI applications, LLM-powered products, and scalable systems.

Related Articles

AI & Security

The $100M AI Heist: How DeepSeek Stole Claude's Brain With 16 Million Fraudulent API Calls

Anthropic exposes industrial-scale IP theft by DeepSeek, Moonshot, and MiniMax—16 million exchanges, 24,000 fake accounts, and a national security threat that changes everything about AI security. This is the full forensic breakdown of the largest AI model theft operation ever documented.

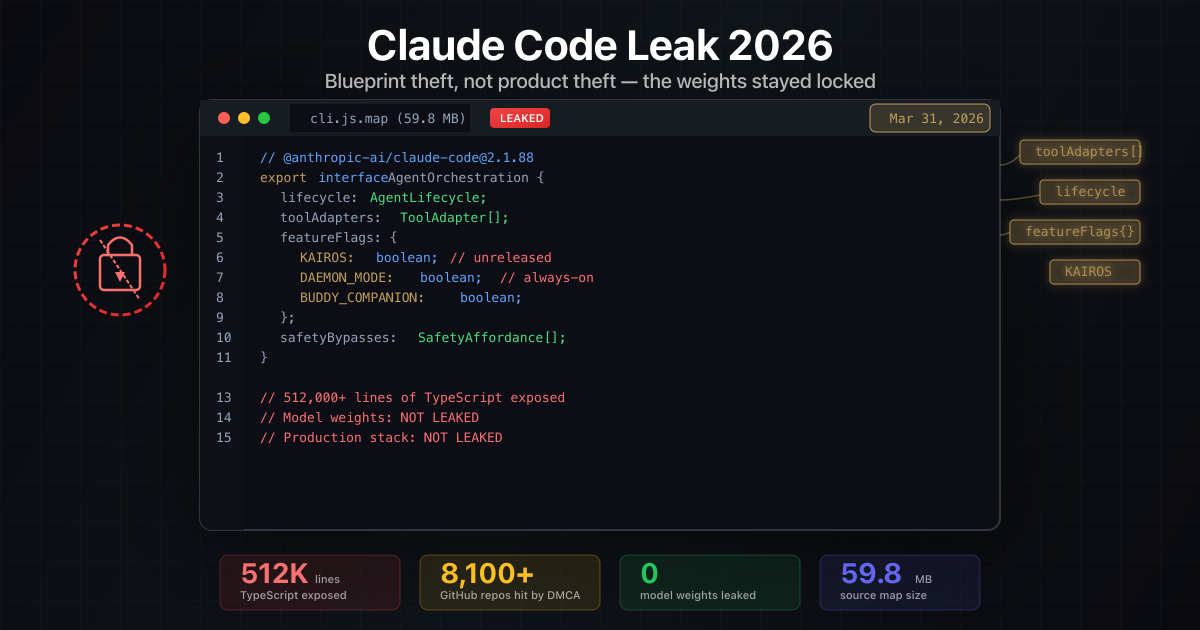

AI & Security

Claude Code Leak 2026: What Escaped, What Stayed Locked, and the Copyright Irony No One Is Talking About

A clear, action-focused breakdown of the March 31, 2026 Claude Code source-map leak: what was exposed, what was not, Anthropic DMCA sweep that hit their own repos, clean-room reimplementations, and the uncomfortable copyright parallels.

AI & Enterprise

Nvidia's OpenClaw Strategy: Why Jensen Huang Says Every Company Needs an AI Agent Plan

At GTC 2026, Jensen Huang said every company needs an OpenClaw strategy. Here is what it means and what U.S. teams should do next.