Claude Code Review by Anthropic: Multi-Agent PR Reviews, Pricing, Setup Guide, and Limits (2026)

How Claude Code Review works: multi-agent PR reviewer, pricing, REVIEW.md customization, and where it beats static analyzers. Complete guide for 2026.

Anthropic launched Code Review for Claude Code on March 9, 2026. In short, it is a managed pull-request reviewer that runs multiple Claude agents in parallel, verifies their findings, and posts ranked review comments back to GitHub.

That sounds incremental until you look at the actual problem it is trying to solve. Modern teams are no longer bottlenecked only by code generation. They are bottlenecked by review quality. AI can now produce diffs faster than most teams can evaluate them, and classic review tooling still mostly catches syntax, style, and narrow static patterns. Anthropic is betting that the next productivity jump comes from moving code review up from rule enforcement to repository-aware reasoning.

If you searched for Anthropic Code Review, Claude Code review pricing, or how Claude Code code review works, this is the practical breakdown: what is confirmed, what it costs, how to configure it, and where it fits in a real engineering workflow.

ANTHROPIC CODE REVIEW AT A GLANCE

- 54% of PRs receive substantive comments (up from 16% internally)

- <1% of findings are incorrect (as marked by engineers)

- $15-$25 typical review cost (depends on diff size and tokens)

- ~20 min typical runtime (research preview latency)

WHO SHOULD READ WHAT

This launch matters for different reasons depending on whether you own developer productivity, security, or day-to-day pull requests.

Use this to skip to what matters

ENGINEERING LEADS

You want to know whether this is worth paying for

Start with the TL;DR, then jump to pricing, access constraints, and the linter comparison.

Outcome: You will know whether Code Review is a useful review layer or an expensive novelty.

PLATFORM + SECURITY

You care about governance and rollout risk

Focus on the managed-service constraints, Zero Data Retention caveat, and customization files.

Outcome: You will leave with a cleaner pilot plan and fewer surprises at approval time.

INDIVIDUAL DEVELOPERS

You want to know what changes in the PR loop

Read how reviews are triggered, what kinds of issues the agents catch, and how to re-run reviews after fixes.

Outcome: You will know how this fits beside tests, CI, and human review instead of replacing them.

TL;DR

- Anthropic launched Code Review on March 9, 2026 as a new Claude Code capability for automated pull-request review.

- Anthropic says the system runs multiple specialized agents in parallel, then verifies and ranks their findings before posting comments.

- The core pitch is logic-aware review, not style policing. Anthropic says the system can reason over changed files, adjacent code, and similar past bugs in the repository.

- In Anthropic’s internal data, 54% of pull requests now receive substantive comments, up from 16% with older approaches.

- Anthropic says engineers marked less than 1% of findings as incorrect, which is unusually low for automated review tooling.

- As of March 10, 2026, Code Review is in research preview for Claude Team and Claude Enterprise customers.

- Anthropic documents a typical cost of $15 to $25 per review and typical completion time of about 20 minutes.

- Teams can customize the reviewer with REVIEW.md for review criteria and CLAUDE.md for project context.

- Anthropic says Code Review is not available for organizations with Zero Data Retention enabled.

- If you need a self-hosted path or are outside this managed GitHub flow, Anthropic points teams to GitHub Actions or GitLab CI/CD integrations instead.

What Anthropic Code Review Actually Is

The cleanest description is this:

Anthropic Code Review is a managed GitHub pull-request reviewer inside Claude Code that uses several Claude agents to inspect a PR from different angles, validate the findings, and surface the highest-value comments.

That last part matters. Plenty of review bots can already leave comments. What Anthropic is trying to do differently is move beyond isolated line comments and reason about:

- whether a change breaks assumptions in another file

- whether a new parameter or state path is handled everywhere it needs to be

- whether a fix silently introduces a downstream regression

- whether the diff violates team-specific review rules that are too nuanced for ESLint or a static policy engine

Anthropic’s launch post gives a concrete example: a change added a new parameter in one file, but the corresponding state and logic were not updated elsewhere. The system flagged the bug in the untouched adjacent code path. That is the category that makes this interesting.
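To make that category concrete, here is a small hypothetical sketch of the same shape of bug. None of these names come from Anthropic's post: `price_for`, `PriceCache`, and the exchange rates are invented for illustration. A diff adds a `currency` parameter to a pricing function, while a cache helper that would live in an untouched file still builds its key from the old arguments.

```python
from typing import Callable, Dict, Tuple

# Hypothetical exchange rates, for illustration only.
RATES = {"USD": 1.0, "EUR": 0.5}


def price_for(sku: str, base_usd: float, currency: str = "USD") -> float:
    """Changed in the diff: `currency` is the newly added parameter."""
    return round(base_usd * RATES[currency], 2)


class PriceCache:
    """Stands in for untouched code in another file."""

    def __init__(self, key_fn: Callable[[str, str], Tuple[str, ...]]) -> None:
        self._key_fn = key_fn
        self._store: Dict[Tuple[str, ...], float] = {}

    def get(self, sku: str, base_usd: float, currency: str = "USD") -> float:
        key = self._key_fn(sku, currency)
        if key not in self._store:
            self._store[key] = price_for(sku, base_usd, currency)
        return self._store[key]


def stale_key(sku: str, currency: str) -> Tuple[str, ...]:
    # Bug: the key predates the new parameter and ignores it.
    return (sku,)


def fixed_key(sku: str, currency: str) -> Tuple[str, ...]:
    # Fix: the new parameter is part of the cache key.
    return (sku, currency)


buggy = PriceCache(stale_key)
buggy.get("sku-1", 10.0, "USD")
buggy_eur = buggy.get("sku-1", 10.0, "EUR")  # served from the USD entry

fixed = PriceCache(fixed_key)
fixed.get("sku-1", 10.0, "USD")
fixed_eur = fixed.get("sku-1", 10.0, "EUR")  # computed separately per currency
```

Each piece looks fine in isolation, so a linter has nothing to flag. The defect only exists in the relationship between the changed function and the unchanged cache key.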

WHAT THE PRODUCT IS OPTIMIZED FOR

The official materials are strongest when read as a systems design story, not a generic 'AI writes comments' story.

PARALLEL REVIEW

Multiple agents inspect the same PR at once

Anthropic says specialized agents review different dimensions of the pull request in parallel, then the system verifies and ranks the findings.

- Parallel analysis instead of one monolithic pass

- Higher-value comments get prioritized

- Designed to reduce noisy bot output

REPO CONTEXT

The review is not limited to changed lines

Anthropic says the system reasons over surrounding code, project context, and similar historical bugs when evaluating a diff.

- Adjacent-file regressions

- Cross-cutting state and parameter handling

- Repository-aware bug patterns

CUSTOM RULES

Teams can encode internal standards without retraining a model

The docs support custom review criteria in REVIEW.md and broader architecture notes in CLAUDE.md.

- Business-critical invariants

- Security and reliability priorities

- Signal over formatting noise

MANAGED SERVICE

Anthropic is optimizing for convenience, not local control

Admins install a GitHub app and get a hosted workflow, but that also means clear governance constraints.

- Research preview only

- No Zero Data Retention support at launch

- Use CI integrations for self-hosted paths

How Anthropic Code Review Works

The review lifecycle is more important than the headline. Once you understand the flow, you can see exactly where this helps and where it does not.

REVIEW FLOW

The official docs describe a managed GitHub review loop with automatic triggers, manual re-runs, and configurable review instructions.

TRIGGER

A PR opens or a developer asks for a review

Code Review can start automatically when a pull request is opened, reopened, or marked ready for review. Developers can also trigger it manually with @claude review after pushing new commits.

- Automatic review on key PR events

- @claude review for fresh changes

- @claude review all for a full pass on an already-reviewed PR

Why this step exists: You can keep the default automation but still explicitly request a deeper pass when a diff changes significantly.
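The trigger rules above can be sketched as a small classifier. This is a mental model, not Anthropic's implementation. The action names are the ones GitHub uses on `pull_request` webhook events, and PR conversation comments arrive as `issue_comment` events. The function name, the returned labels, and the rule that the command must start the comment are assumptions made for this sketch.

```python
from typing import Optional

# GitHub `pull_request` webhook actions matching "opened, reopened,
# or marked ready for review".
AUTO_REVIEW_ACTIONS = {"opened", "reopened", "ready_for_review"}


def classify_trigger(event: str, action: str = "", comment: str = "") -> Optional[str]:
    """Return a hypothetical review mode for a webhook-like event, or None."""
    if event == "pull_request" and action in AUTO_REVIEW_ACTIONS:
        return "automatic"
    if event == "issue_comment":
        words = comment.strip().lower().split()
        # Assumption: the command must be the first thing in the comment.
        if words[:2] == ["@claude", "review"]:
            return "full-pass" if words[2:3] == ["all"] else "fresh-changes"
    return None
```

In this model a plain push (GitHub's `synchronize` action) does not start a review on its own, which is why the manual `@claude review` command matters after new commits.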

ANALYSIS

Specialized agents examine the PR in parallel

Anthropic says several Claude agents inspect different dimensions of the pull request at the same time rather than forcing one long sequential read.

- Changed files and adjacent code

- Project context and architecture notes

- Historical bug patterns and custom review criteria

Why this step exists: The system is designed to reason about downstream effects instead of only matching static patterns on the changed lines.
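As a rough illustration of the fan-out pattern, the sketch below runs several independent reviewers over the same diff concurrently and merges their findings. It only shows the shape of parallel analysis. The two reviewers are hypothetical stand-ins that use string matching where the real product uses Claude agents, and the file paths are invented.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List

Diff = Dict[str, str]  # path -> changed text
Reviewer = Callable[[Diff], List[str]]


def check_auth(diff: Diff) -> List[str]:
    # Stand-in for an agent focused on authorization changes.
    return [f"{p}: touches permission logic" for p, t in diff.items() if "role" in t]


def check_idempotency(diff: Diff) -> List[str]:
    # Stand-in for an agent focused on webhook handling.
    return [f"{p}: handler may not be idempotent" for p, t in diff.items() if "webhook" in t]


def run_reviewers(diff: Diff, reviewers: List[Reviewer]) -> List[str]:
    """Run every reviewer concurrently and merge results in reviewer order."""
    with ThreadPoolExecutor(max_workers=len(reviewers)) as pool:
        results = list(pool.map(lambda reviewer: reviewer(diff), reviewers))
    return [finding for findings in results for finding in findings]


diff = {
    "apps/api/billing.py": "if user.role == 'admin': refund()",
    "apps/worker/hooks.py": "def handle_webhook(event): send_email()",
}
findings = run_reviewers(diff, [check_auth, check_idempotency])
```

Because each reviewer sees the whole diff rather than a slice of it, a specialized pass can still notice problems that span files.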

VERIFICATION

Findings are checked and ranked before posting

Anthropic says the system verifies findings and ranks them by severity before surfacing comments in GitHub.

- Priority over comment spam

- Reduced low-signal findings

- More actionable review output

Why this step exists: This is the layer that separates the product from a naive 'comment on everything' bot.
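A minimal sketch of a verify-then-rank step, assuming each finding carries a severity label and a flag set by a second confirming pass. The `Finding` fields, the severity names, and the comment limit are all hypothetical. Anthropic does not document its internal data model.

```python
from dataclasses import dataclass
from typing import List

SEVERITY_ORDER = {"critical": 0, "major": 1, "minor": 2}  # hypothetical labels


@dataclass(frozen=True)
class Finding:
    path: str
    line: int
    message: str
    severity: str
    verified: bool  # set by a second pass that tried to confirm the issue


def select_comments(findings: List[Finding], limit: int = 5) -> List[Finding]:
    """Drop unverified and duplicate findings, then keep the most severe."""
    seen = set()
    kept = []
    for finding in findings:
        key = (finding.path, finding.line, finding.message)
        if finding.verified and key not in seen:
            seen.add(key)
            kept.append(finding)
    # list.sort is stable, so findings of equal severity keep their order.
    kept.sort(key=lambda finding: SEVERITY_ORDER[finding.severity])
    return kept[:limit]


raw = [
    Finding("a.py", 10, "unused variable", "minor", True),
    Finding("b.py", 42, "refund can run twice", "critical", True),
    Finding("b.py", 42, "refund can run twice", "critical", True),  # duplicate
    Finding("c.py", 7, "possible race", "major", False),  # not confirmed
]
posted = select_comments(raw)
```

Here only two of the four raw findings would be posted, with the critical one first.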

FOLLOW-THROUGH

Humans still own merge decisions and fixes

Developers review the comments, patch the code, and re-run the reviewer if needed. The tool is a reviewer, not an approval authority.

- Use it beside tests and CI

- Keep human code owners in the loop

- Measure accepted vs ignored comments

Why this step exists: The strongest teams will treat this as an additional reasoning layer, not as permission to stop reviewing code.
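Measuring accepted versus ignored comments can start as a simple tally. The sketch below assumes your team labels each bot comment by hand as `accepted`, `ignored`, or `incorrect`. Those labels and the function name are this sketch's own convention, not something the product exports.

```python
from collections import Counter
from typing import Dict, Iterable

LABELS = ("accepted", "ignored", "incorrect")  # hypothetical team labels


def comment_outcomes(outcomes: Iterable[str]) -> Dict[str, float]:
    """Return the share of review comments in each outcome bucket."""
    counts = Counter(outcomes)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {label: counts[label] / total for label in LABELS}


sample = ["accepted"] * 6 + ["ignored"] * 3 + ["incorrect"]
shares = comment_outcomes(sample)
```

A falling accepted share during a pilot is an early sign that the review instructions are generating noise.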

Why This Is More Than Another Linter

Most existing automation helps in one of two ways:

- it enforces deterministic rules very cheaply

- it blocks clearly bad patterns before humans ever look at the code

That is useful, but it is not the same as reasoning through intent. Anthropic’s bet is that AI-generated diffs create too many review situations where the failure is not “bad syntax” but “locally plausible code that breaks a larger system assumption.”

| Capability | Linters and static analyzers | Anthropic Code Review |
|---|---|---|
| Syntax and formatting | Excellent, deterministic, cheap | Possible, but not the main value |
| Cross-file logic regressions | Often weak unless hard-coded explicitly | Core product pitch |
| Repository and bug-history context | Usually none | Anthropic says yes |
| Custom team review rules | Rigid, rule-authoring heavy | Configurable through REVIEW.md and CLAUDE.md |
| Latency and cost | Seconds and usually near-zero marginal cost | About 20 minutes and $15-$25 per review |
| Final merge authority | None | None |

The obvious tradeoff is that Anthropic’s approach is slower and more expensive than static tooling. But that is the wrong comparison if the real alternative is a human reviewer missing a subtle cross-file bug in a large AI-generated diff.
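As rough arithmetic on the documented $15 to $25 range, the sketch below estimates monthly spend and the human-review time a single review would need to save to pay for itself. The review volume and the hourly engineering cost are placeholder inputs, not figures from Anthropic.

```python
from typing import Tuple

COST_PER_REVIEW_USD = (15, 25)  # typical range documented by Anthropic


def monthly_cost_range(reviews_per_month: int) -> Tuple[int, int]:
    """Return the (low, high) monthly spend for a given number of review runs."""
    low, high = COST_PER_REVIEW_USD
    return reviews_per_month * low, reviews_per_month * high


def break_even_hours(review_cost_usd: float, engineer_hourly_usd: float) -> float:
    """Hours of engineer time one review must save to cover its own cost."""
    return review_cost_usd / engineer_hourly_usd


low, high = monthly_cost_range(200)    # hypothetical: 200 review runs a month
hours = break_even_hours(25, 100)      # hypothetical: $100 per engineer hour
```

With those placeholder inputs the spend is $3,000 to $5,000 a month, and a top-of-range review breaks even if it saves about 15 minutes of engineer time.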

Pricing, Availability, and Setup

As of March 10, 2026, Anthropic documents the following:

- Availability: research preview for Claude Team and Claude Enterprise

- Cost: usually $15 to $25 per review

- Speed: usually around 20 minutes

- Setup path: admin installs the Anthropic GitHub app, connects repositories, and enables review on the branches you want covered

WHAT YOU NEED VS WHAT CAN BLOCK YOU

This is the part to show platform teams before anyone promises a same-week rollout.

ROLLOUT PATH

The hosted setup is straightforward

Anthropic is optimizing for fast adoption in GitHub-centric teams rather than custom infrastructure work.

- Claude Team or Enterprise account

- Admin installs the Anthropic GitHub app

- Select repos and protected branches

- Add REVIEW.md and CLAUDE.md for higher-signal reviews

This is a managed Anthropic service, so the operational burden is low.

CONSTRAINTS

The governance boundaries are real

The convenience comes with limits that some organizations will reject immediately.

- Not available with Zero Data Retention enabled

- Anthropic-hosted service rather than self-hosted execution

- Roughly 20-minute review times do not fit every CI gate

- Teams outside the managed GitHub flow should use CI integrations instead

If you need tighter control, Anthropic directs teams to GitHub Actions or GitLab CI/CD based workflows.

How To Configure Custom Checks Without Turning It Into Noise

The most important operational detail in the docs is not the launch metric. It is the customization model.

Anthropic exposes two simple files:

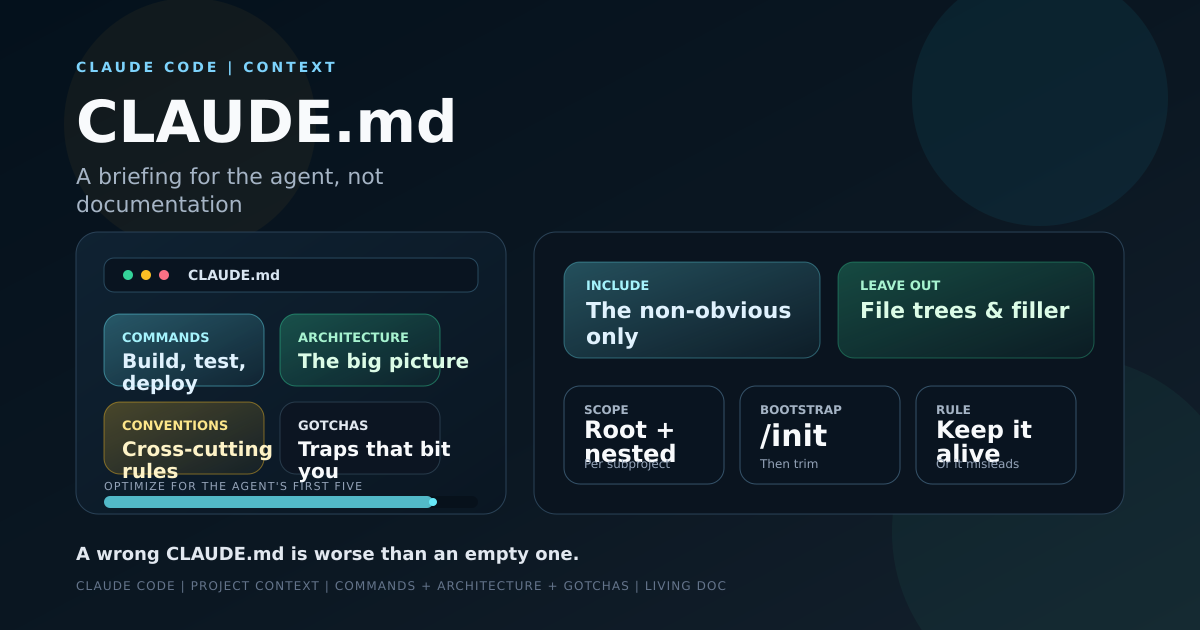

- REVIEW.md for pull-request review instructions

- CLAUDE.md for broader repository context, architecture, and project conventions

That is the right separation. CLAUDE.md tells the agents how your system is shaped. REVIEW.md tells them what to care about during review.

Example REVIEW.md:

# REVIEW.md

Prioritize comments about:

- authorization regressions across admin and customer paths

- idempotency in webhook handlers

- missing transaction boundaries on billing writes

- async jobs that can double-send emails, refunds, or notifications

Deprioritize:

- formatting and import order

- naming-only comments without runtime risk

- style nits already covered by linting

Example CLAUDE.md:

# CLAUDE.md

Architecture notes:

- packages/auth owns all role and permission checks

- apps/api is the only service allowed to mutate billing state

- apps/worker replays webhook events and must remain idempotent

- do not write directly to Subscription rows outside BillingService

This is where teams can get real leverage. If you do not encode your business invariants, the model falls back to generic review behavior. If you encode too much low-value policy, you recreate the comment spam problem you were trying to avoid.
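One way to keep REVIEW.md from growing into a policy dump is a small check in your own tooling. The sketch below assumes the layout of the example above: section lines ending in a colon, followed by `- ` bullets. That layout and the threshold of eight priorities are this article's convention and an arbitrary cutoff. Anthropic does not document a required REVIEW.md format.

```python
from typing import Dict, List, Optional

SAMPLE_REVIEW_MD = """\
# REVIEW.md
Prioritize comments about:
- authorization regressions across admin and customer paths
- idempotency in webhook handlers
- missing transaction boundaries on billing writes
- async jobs that can double-send emails, refunds, or notifications
Deprioritize:
- formatting and import order
- naming-only comments without runtime risk
- style nits already covered by linting
"""


def bullets_by_section(text: str) -> Dict[str, List[str]]:
    """Group '- ' bullets under the nearest preceding line that ends in ':'."""
    sections: Dict[str, List[str]] = {}
    current: Optional[str] = None
    for raw in text.splitlines():
        line = raw.strip()
        if line.endswith(":") and not line.startswith("-"):
            current = line[:-1]
            sections[current] = []
        elif line.startswith("- ") and current is not None:
            sections[current].append(line[2:])
    return sections


def too_noisy(text: str, max_priorities: int = 8) -> bool:
    """Flag a file whose priority list has outgrown an arbitrary threshold."""
    priorities = bullets_by_section(text).get("Prioritize comments about", [])
    return len(priorities) > max_priorities


sections = bullets_by_section(SAMPLE_REVIEW_MD)
```

The useful part is the forcing function more than the exact number: every new priority should displace a weaker one.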

Where Anthropic Code Review Fits Best

The ideal use case is not every repository on day one.

It is strongest when:

- pull requests are large, AI-assisted, or cross-cutting

- human reviewers routinely miss multi-file regressions

- your team has real architectural invariants that are hard to encode in static rules

- you are willing to pay for review quality, not just for code generation speed

It is weaker when:

- you need ultra-fast deterministic gating in seconds

- your organization requires Zero Data Retention today

- your diffs are small and most review comments are already stylistic

- you expect the tool to replace code owners, tests, or threat modeling
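The fit criteria above can be turned into a simple routing rule for a pilot, for example to decide which pull requests get a manual review request. This is a sketch under stated assumptions: the sensitive path prefixes, the 300-line threshold, and the `ai_assisted` flag are hypothetical and would come from your own repository and tooling.

```python
from dataclasses import dataclass
from typing import List

SENSITIVE_PREFIXES = ("packages/auth/", "apps/api/billing/")  # hypothetical paths


@dataclass
class PullRequest:
    changed_files: List[str]
    lines_changed: int
    ai_assisted: bool = False


def worth_deep_review(pr: PullRequest, min_lines: int = 300) -> bool:
    """Route a PR to the slow reviewer when it is risky, or large and broad."""
    # Treat the first two path components as the owning area of a file.
    areas = {"/".join(path.split("/")[:2]) for path in pr.changed_files}
    touches_sensitive = any(path.startswith(SENSITIVE_PREFIXES) for path in pr.changed_files)
    cross_cutting = len(areas) > 1
    large = pr.lines_changed >= min_lines
    return touches_sensitive or (large and (cross_cutting or pr.ai_assisted))


small = PullRequest(["docs/readme.md"], 12)
risky = PullRequest(["packages/auth/roles.py"], 20)
broad = PullRequest(["apps/api/x.py", "apps/worker/y.py"], 500)
```

Small stylistic diffs stay on the fast deterministic path, while anything touching a sensitive area is routed to the deeper review regardless of size.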

There is a broader product thesis here too: Anthropic is clearly trying to own more of the full coding loop, not just code generation. That makes sense. If models keep writing more code, the value shifts toward tools that can verify, criticize, and constrain that code before it reaches production.

Anthropic is also expanding the security side of that workflow with Claude Code Security, which makes this launch look less like a one-off bot feature and more like the start of a layered AI review stack.

Final Take

Anthropic Code Review is not interesting because it leaves AI comments on a PR. Plenty of tools can do that. It is interesting because Anthropic is aiming at a harder problem: can an AI reviewer reason across a real codebase well enough to catch bugs that deterministic tooling and rushed humans both miss?

The early signals are strong enough to take seriously. The internal comment-rate jump from 16% to 54%, the claimed sub-1% incorrect rate, and the docs around REVIEW.md and CLAUDE.md all suggest this is a real attempt to make review agentic rather than cosmetic.

But the tradeoffs are equally real: this is a managed service, it is not compatible with Zero Data Retention, it costs real money per review, and it takes real time to run.

So the right framing is not “Will Anthropic replace code review?” The right framing is: for high-risk PRs, does paying for a slower, reasoning-heavy AI reviewer catch enough bugs to justify the latency and cost?

For teams already generating code with AI, that is exactly the next question that matters.

Sources

- Anthropic: Introducing Code Review

- Anthropic Docs: Setting up Code Review

- Claude Code Docs: How Claude Code works

- Anthropic Solutions: Claude Code Security

- TechCrunch: Anthropic launches code review tool to check flood of AI-generated code

- VentureBeat: Anthropic rolls out Code Review for Claude Code

About the Author

Software engineer writing about AI, Claude Code, LLMs, OpenAI, Anthropic, and developer tooling. 5+ years building production systems at Expedia Group, Tekion, and BYJU'S.
