OpenAI GPT-5.4 Complete Guide: Benchmarks, Use Cases, Pricing, API, and GPT-5.4 Pro Comparison

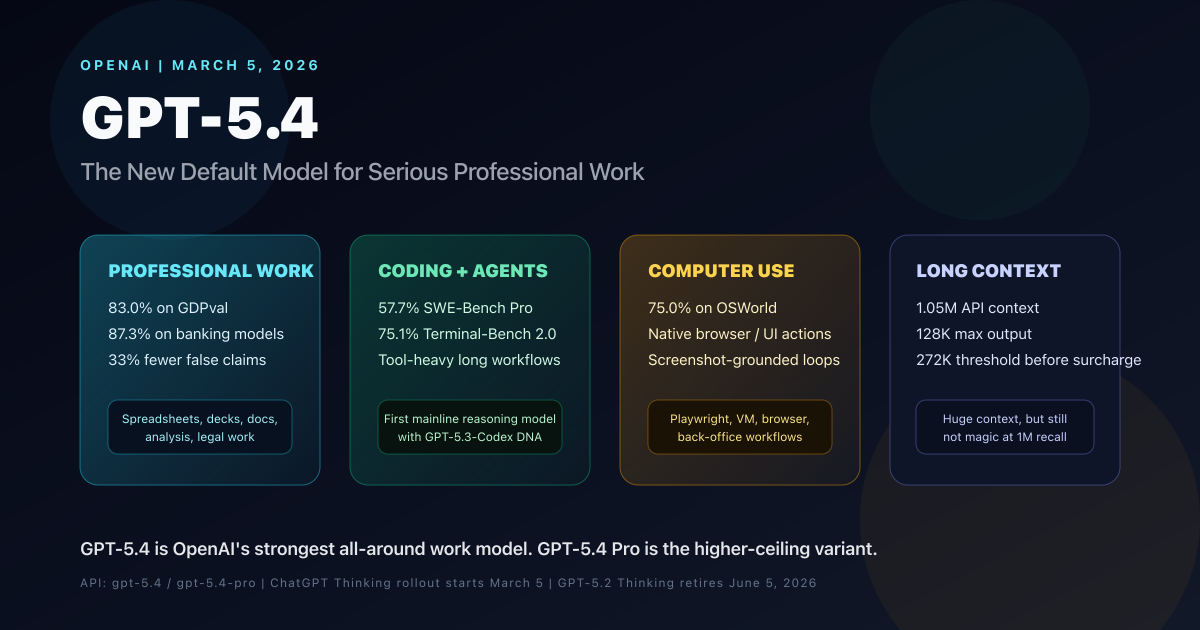

OpenAI GPT-5.4 is the new mainline reasoning model for professional work. This complete guide covers benchmarks, use cases, pricing, API details, long-context behavior, computer use, tool search, GPT-5.4 Pro, and how it compares with GPT-5.2 and GPT-5.3-Codex.

OpenAI released GPT-5.4 on March 5, 2026, and this is the first GPT release in a while that feels less like a narrow benchmark bump and more like a model-line reset.

The reason is simple: GPT-5.4 is the first mainline OpenAI reasoning model that combines frontier professional-work quality, frontier coding from GPT-5.3-Codex, native computer use, and 1.05M-context API support in the same default model. That matters a lot if your real workload is not “one perfect answer in one shot,” but messy multi-step work spread across documents, spreadsheets, web apps, codebases, and tool chains.

The short answer: GPT-5.4 is now OpenAI’s best all-around model for serious professional work. If you need one model that can research, write, analyze, code, use tools, drive browsers, and survive large contexts, this is the new default. If you need the highest ceiling and can tolerate much higher latency and price, GPT-5.4 Pro is the step-up.

GPT-5.4 AT A GLANCE

| Metric | Value | Note |
|---|---|---|
| GDPval | 83.0% | professional work score |
| OSWorld | 75.0% | computer-use success rate |
| Context Window | 1.05M | API support |
| Input / Output | $2.50 / $15 | per 1M tokens |

WHO SHOULD READ WHAT

This guide covers several different buying and implementation questions. Start with the path that matches your actual decision.

PRODUCT TEAMS

You need the default model choice

Start with the professional-work benchmarks, then jump to the API playbook and pricing section.

Outcome: You will know whether GPT-5.4 is the default model for your product and where Pro stops being worth it.

CODING + AGENTS

You care about coding, tools, and browser workflows

Focus on the coding benchmarks, computer-use section, and the model selection map.

Outcome: You will see where GPT-5.4 beats GPT-5.3-Codex, and where a specialist coding model still deserves a test.

ENTERPRISE EVALUATORS

You are worried about tradeoffs, cost, and rollout risk

Read the long-context caveats, Pro feature gaps, and the migration checklist before deciding anything.

Outcome: You will leave with a cleaner rollout plan instead of over-reading the headline benchmarks.

TL;DR

- GPT-5.4 launched on March 5, 2026 as OpenAI’s new mainline reasoning model for professional work.

- OpenAI says it is the first mainline reasoning model to absorb the frontier coding capabilities of GPT-5.3-Codex.

- On GDPval, GPT-5.4 reaches 83.0%, up from 70.9% for GPT-5.2.

- On OpenAI’s internal investment banking modeling tasks, GPT-5.4 scores 87.3% versus 68.4% for GPT-5.2.

- On SWE-Bench Pro, GPT-5.4 posts 57.7%, slightly ahead of GPT-5.3-Codex at 56.8%.

- On OSWorld-Verified, GPT-5.4 hits 75.0%, above GPT-5.2 at 47.3% and even above the human baseline OpenAI cites at 72.4%.

- The API model supports a 1,050,000 token context window and 128,000 max output tokens, but benchmark results show quality still drops sharply at the far end of that window.

- GPT-5.4 costs more per token than GPT-5.2: `$2.50` input, `$0.25` cached input, and `$15.00` output per 1M tokens.

- GPT-5.4 Pro costs much more at `$30` input and `$180` output per 1M tokens, and is for the hardest tasks only.

- In ChatGPT, GPT-5.4 Thinking replaces GPT-5.2 Thinking for Plus, Team, and Pro users. GPT-5.2 Thinking retires on June 5, 2026.

What GPT-5.4 Actually Is

OpenAI’s own positioning is unusually clear here.

GPT-5.4 is:

- the new default frontier model for complex professional work

- the first mainline reasoning model that inherits GPT-5.3-Codex-level coding ambition

- OpenAI’s first general-purpose model with native computer use

- a model with 1.05M context in the API and experimental 1M-context support in Codex

- a model that supports the full modern agent stack: web search, file search, image generation, code interpreter, hosted shell, apply patch, skills, computer use, MCP, and tool search

That last point is the real story.

Previous OpenAI model choices were easier to split into buckets:

- use the reasoning model for analysis

- use the coding model for coding

- use special tools for browser or desktop automation

GPT-5.4 makes those boundaries much blurrier.

-

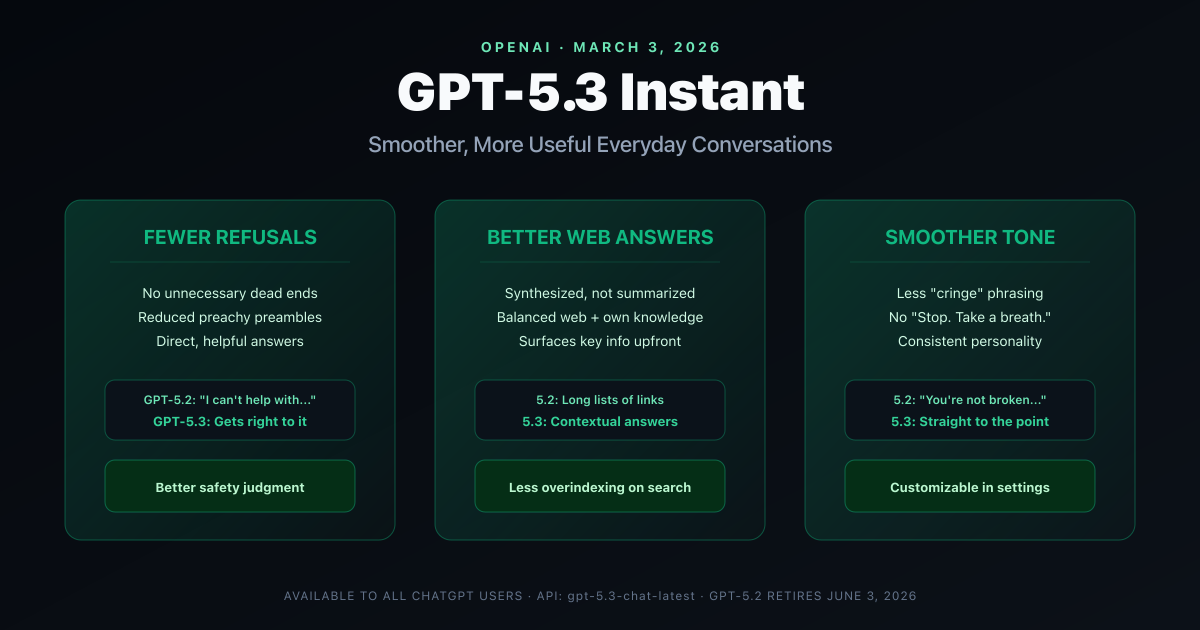

March 3, 2026

GPT-5.3 Instant ships

OpenAI updates the everyday ChatGPT experience with fewer refusals, smoother tone, and better web synthesis.

-

March 5, 2026

GPT-5.4 and GPT-5.4 Pro launch

The mainline reasoning model absorbs GPT-5.3-Codex coding strengths and adds native computer use plus 1.05M API context.

-

June 5, 2026

GPT-5.2 Thinking retires in ChatGPT

GPT-5.2 remains in the Legacy Models picker for paid users for three months, then leaves the main ChatGPT flow.

1. Professional Work Is the Real Headline

Most model launches still center on coding, math, or abstract reasoning. GPT-5.4 is different. OpenAI’s release materials repeatedly frame it around real office work: spreadsheets, presentations, documents, legal analysis, and research-heavy deliverables.

That is not marketing fluff. The public numbers back it up.

| Eval | GPT-5.4 | GPT-5.2 |
|---|---|---|
| GDPval | 83.0% | 70.9% |
| Investment banking modeling tasks | 87.3% | 68.4% |
| OfficeQA | 68.1% | 63.1% |
| User-flagged factual error set | 33% fewer false claims | Baseline |
| Full responses with any error | 18% less likely | Baseline |

This is where GPT-5.4 becomes more than a “better chatbot.”

It is now credible for:

- board update outlines and narrative memos

- spreadsheet modeling and sanity-checking

- presentation draft generation with stronger visual variety

- long document comparison and synthesis

- contract-heavy diligence work

- finance, strategy, and operations research that needs both writing and structured reasoning

OpenAI also says human raters preferred GPT-5.4-generated presentations 68.0% of the time over GPT-5.2 due to stronger aesthetics, more visual variety, and better use of image generation.

That matters because a lot of “knowledge work” is not just about factual recall. It is about producing work products that look usable.

2. GPT-5.4 Turns Coding Into a First-Class Default Capability

The coding section is where this launch gets more subtle.

OpenAI says GPT-5.4 combines the coding strengths of GPT-5.3-Codex with leading knowledge-work and computer-use capabilities, especially for longer-running tasks where the model can use tools, iterate, and keep pushing with less manual intervention.

The official comparison table supports that claim, but with nuance.

| Coding Eval | GPT-5.4 | GPT-5.3-Codex | GPT-5.2 |
|---|---|---|---|
| SWE-Bench Pro (Public) | 57.7% | 56.8% | 55.6% |
| Terminal-Bench 2.0 | 75.1% | 77.3% | 62.2% |
| Context window | 1.05M | 400K | 400K |
| Primary positioning | Generalist pro work + coding | Specialized agentic coding | Previous frontier work model |

Here is the practical read:

- GPT-5.4 is now the best default if your coding work is mixed with analysis, docs, browser steps, and tool orchestration.

- GPT-5.3-Codex remains very relevant if your workload is mostly pure coding inside a Codex-style environment.

- GPT-5.2 is now mostly a legacy comparison target.

That second point is my inference from OpenAI’s own tables. GPT-5.4 edges GPT-5.3-Codex on SWE-Bench Pro, but GPT-5.3-Codex still leads on Terminal-Bench 2.0. So the cleaner way to think about this is:

- GPT-5.4 = strongest all-around engineering model

- GPT-5.3-Codex = still a very sharp specialist for terminal-heavy coding loops

3. Native Computer Use Is One of the Biggest Practical Upgrades

This is the part many people will underrate at first.

OpenAI calls GPT-5.4 its first general-purpose model with native computer-use capabilities. That is a big shift because it means the mainline reasoning model can now operate on screenshots, return UI actions, and participate directly in browser or desktop workflows.

The benchmark jump is not small.

COMPUTER USE AND VISION

| Metric | Score | Note |
|---|---|---|
| OSWorld-Verified | 75.0% | GPT-5.4 success rate |
| GPT-5.2 on OSWorld | 47.3% | previous baseline |
| MMMU Pro | 81.2% | no-tools vision score |
| MMMU Pro | 82.1% | with tools |

OpenAI’s docs describe three practical ways to use this capability:

- a built-in `computer` tool loop for screenshot-based UI actions

- a custom browser or VM harness with Playwright, Selenium, VNC, or MCP

- a code-execution harness where the model writes and runs scripts for UI work

That opens up a long list of real product use cases:

- browser QA and acceptance testing

- reproducing UI bugs from screenshots or step lists

- support workflows across admin panels and dashboards

- CRM or ERP task automation that still needs human supervision

- accessibility and regression walkthroughs

- research agents that move between tabs, forms, downloads, and screenshots

The built-in loop is also straightforward. OpenAI’s computer-use docs describe it as:

- send a task with the `computer` tool enabled

- inspect the returned `computer_call`

- execute the returned actions in order

- send back an updated screenshot as `computer_call_output`

- repeat until the model stops asking for computer actions
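One turn of that loop can be sketched as a plain function. This is a minimal illustration, not OpenAI's SDK: the item fields `call_id`, `actions`, and `screenshot`, and the helpers `executeAction` and `captureScreenshot`, are hypothetical names chosen for the example, so check the computer-use docs for the real payload shapes.

```javascript
// Handles one turn of the loop: run every requested action in order,
// then build the follow-up items carrying a fresh screenshot.
// Field names (call_id, actions, screenshot) are assumptions for illustration.
function handleComputerCalls(output, { executeAction, captureScreenshot }) {
  const calls = output.filter((item) => item.type === 'computer_call');

  // No computer_call items means the model stopped asking for UI actions.
  if (calls.length === 0) return null;

  return calls.map((call) => {
    for (const action of call.actions) {
      executeAction(action); // e.g. forward to Playwright in a real harness
    }
    return {
      type: 'computer_call_output',
      call_id: call.call_id,
      screenshot: captureScreenshot(),
    };
  });
}
```

A caller would keep sending the returned items back to the model and stop once the function returns `null`. In a real harness the two helpers would almost certainly be asynchronous; they are synchronous here only to keep the sketch short.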

Minimal computer-use example

```javascript
import OpenAI from 'openai';

const client = new OpenAI();

// Enable the built-in computer tool and describe the UI task in plain language.
const response = await client.responses.create({
  model: 'gpt-5.4',
  tools: [{ type: 'computer' }],
  input:
    'Check whether the Filters panel is open. If it is not open, click Show filters. Then type penguin in the search box. Use the computer tool for UI interaction.'
});

// The output contains the computer_call items to execute next.
console.log(response.output);
```

4. Tool Use and MCP Workloads Are Where GPT-5.4 Starts Feeling Like an Agent Model

GPT-5.4 is not just stronger at single-model reasoning. It is stronger at deciding what tools to call and when.

OpenAI’s official evals show:

- 82.7% on BrowseComp for GPT-5.4

- 89.3% on BrowseComp for GPT-5.4 Pro

- 67.2% on MCP Atlas for GPT-5.4

- 54.6% on Toolathlon for GPT-5.4

- 98.9% on Tau2-bench Telecom for GPT-5.4

That matters for teams building agents across big internal tool surfaces.

The most interesting supporting feature here is tool search.

According to OpenAI’s tool-search docs, tool search lets the model dynamically search for and load tools into the context only when needed. The point is not just convenience. It can reduce token usage, preserve the model cache better, and avoid dumping a huge tool catalog into the prompt up front.

That is especially useful when you have:

- large internal tool catalogs

- namespaced function sets

- tenant-specific tool inventories

- MCP servers with many functions

- agent systems where most tools are irrelevant on most turns

Minimal tool-search pattern

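```javascript
// Assumes `client` from the earlier example and a `crmNamespace` tool
// namespace defined elsewhere (its definition is not shown in this guide).
const response = await client.responses.create({
  model: 'gpt-5.4',
  input: 'List open orders for customer CUST-12345.',
  tools: [crmNamespace, { type: 'tool_search' }],
  parallel_tool_calls: false
});
```

In OpenAI’s docs, the deferred tools live inside a namespace or MCP server and are loaded only when the model decides it needs them.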

That is a major design improvement for enterprise agents because it moves you away from the old pattern of shoving 50 JSON schemas into every request.
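To make the idea of deferred loading concrete, here is a toy local stand-in. It is not how the hosted `tool_search` works; the catalog entries, the `searchTools` helper, and the keyword scoring are all invented for illustration. It only shows the shape of the pattern: keep the full catalog out of the prompt and surface the few tools that match the request.

```javascript
// Hypothetical catalog: names and descriptions are made up for this sketch.
const catalog = [
  { name: 'crm.list_open_orders', description: 'List open orders for a customer' },
  { name: 'crm.update_contact', description: 'Update contact details for a customer' },
  { name: 'billing.issue_refund', description: 'Issue a refund for an invoice' },
];

// Returns up to `limit` tools whose name or description mentions the most
// query terms. Plain substring matching, purely illustrative.
function searchTools(query, tools, limit = 2) {
  const terms = query.toLowerCase().split(/\W+/).filter(Boolean);
  return tools
    .map((tool) => {
      const text = `${tool.name} ${tool.description}`.toLowerCase();
      const score = terms.filter((term) => text.includes(term)).length;
      return { tool, score };
    })
    .filter((entry) => entry.score > 0)
    .sort((a, b) => b.score - a.score)
    .slice(0, limit)
    .map((entry) => entry.tool);
}
```

With this catalog, `searchTools('open orders', catalog)` returns only the `crm.list_open_orders` entry, so a single schema would enter the context instead of all three.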

5. The 1M Context Window Is Real, but It Is Not Magic

This is one of the most important practical caveats in the whole release.

Yes, GPT-5.4 supports a 1,050,000 token context window in the API, with 128,000 max output tokens. OpenAI also says GPT-5.4 in Codex has experimental support for the 1M window, and requests above the standard 272K context threshold incur higher usage rates.

But you should not read “1M context” as “perfect 1M recall.”

OpenAI’s own long-context evals show a very clear pattern:

| Range | GPT-5.4 score | Interpretation |
|---|---|---|
| MRCR v2 4K to 8K | 97.3% | Excellent short-context retrieval |
| MRCR v2 64K to 128K | 86.0% | Still strong at large prompt sizes |
| MRCR v2 128K to 256K | 79.3% | Usable, but quality is already slipping |
| MRCR v2 256K to 512K | 57.5% | Very large-context retrieval gets fragile |
| MRCR v2 512K to 1M | 36.6% | Do not assume reliable needle retrieval at the far edge |
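One way to act on these numbers is to route by prompt size. The sketch below looks up the published MRCR v2 score for a given prompt length and flags when retrieval is probably worth adding. The helper names and the 80% cutoff are hypothetical, and the token bounds assume "K" means 1,000 tokens.

```javascript
// MRCR v2 scores transcribed from the table above. Token bounds assume
// "K" = 1,000 tokens and "1M" = 1,000,000, which is an assumption.
const MRCR_BANDS = [
  { minTokens: 4000, maxTokens: 8000, score: 97.3 },
  { minTokens: 64000, maxTokens: 128000, score: 86.0 },
  { minTokens: 128000, maxTokens: 256000, score: 79.3 },
  { minTokens: 256000, maxTokens: 512000, score: 57.5 },
  { minTokens: 512000, maxTokens: 1000000, score: 36.6 },
];

// Returns the published score for the first band containing promptTokens,
// or null when the table lists no figure for that size.
function publishedMrcrScore(promptTokens) {
  const band = MRCR_BANDS.find(
    (b) => promptTokens >= b.minTokens && promptTokens <= b.maxTokens
  );
  return band ? band.score : null;
}

// minScore is a hypothetical cutoff; tune it against your own evals.
function shouldAddRetrieval(promptTokens, minScore = 80) {
  const score = publishedMrcrScore(promptTokens);
  return score === null ? null : score < minScore;
}
```

The table has no row between 8K and 64K, so the lookup returns `null` there rather than guessing.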

LONG-CONTEXT REALITY CHECK

The 1M window is useful, but the practical question is where it helps and where teams start over-trusting it.

USE IT WHEN

The full window creates real product value

GPT-5.4 benefits from giant context when the job is broad synthesis, planning, or maintaining large working memory, not perfect far-edge recall.

- Giant codebase snapshots for planning and refactor scoping

- Full diligence rooms or long policy bundles for first-pass synthesis

- Many prior conversation turns plus tools plus working memory

- Large multi-document comparison tasks where partial recall is still valuable

DO NOT ASSUME

A huge window does not replace retrieval discipline

OpenAI’s own evals show retrieval gets much weaker at the far edge, and large sessions add hidden budget and pricing complexity.

- You can skip retrieval, chunking, ranking, or tool-based search

- Needle retrieval stays reliable near the 512K to 1M range

- Reasoning tokens are free just because they are not visible

- Sessions above 272K input avoid pricing surcharges

Another important API detail from OpenAI’s reasoning docs: reasoning tokens are not visible in the raw response, but they still take up space inside the context window and are billed as output tokens. OpenAI recommends leaving at least 25,000 tokens of headroom for reasoning and outputs while you are learning how your prompts behave.

That is an easy thing to miss, and it will absolutely affect real cost and truncation behavior.
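A quick pre-flight check makes the headroom rule harder to forget. This is a rough sketch using the limits quoted above; the function name is hypothetical, and it assumes hidden reasoning and visible output share a single budget capped at the 128,000-token output limit.

```javascript
const CONTEXT_WINDOW = 1050000;   // GPT-5.4 API context window, in tokens
const MAX_OUTPUT_TOKENS = 128000; // GPT-5.4 max output tokens
const MIN_HEADROOM = 25000;       // suggested room for reasoning plus output

// Reports how many tokens remain for reasoning and output after the prompt,
// and whether that meets the suggested 25K headroom.
// Assumption: reasoning and visible output draw from the same capped budget.
function contextHeadroom(inputTokens) {
  const available = CONTEXT_WINDOW - inputTokens;
  const outputBudget = Math.max(0, Math.min(available, MAX_OUTPUT_TOKENS));
  return { outputBudget, enoughHeadroom: outputBudget >= MIN_HEADROOM };
}
```

For example, a 1,040,000-token prompt leaves only 10,000 tokens, so `enoughHeadroom` comes back `false` even though the prompt itself fits in the window.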

6. Steerability Finally Feels Productive Instead of Cosmetic

OpenAI also improved the actual ChatGPT interaction pattern around GPT-5.4 Thinking.

For longer and more complex prompts, the model now gives a preamble describing how it plans to approach the task. Users can also redirect it mid-response without fully restarting.

This sounds small, but it is a real usability upgrade for messy work:

- “keep the thesis but make the deck more investor-facing”

- “same structure, less legal language”

- “stop summarizing and switch into recommendation mode”

- “use the spreadsheet, not the PDF, as the source of truth”

That is the kind of interaction pattern that makes a reasoning model more practical for long professional workflows.

Every Practical Use Case Where GPT-5.4 Makes Sense

If you want the simplest high-level rule, it is this:

GPT-5.4 is strongest when the task spans multiple modes of work at once.

Not just writing. Not just coding. Not just tool calling. Not just browser control.

All of them together.

USE-CASE MAP

GPT-5.4 is most useful when one workflow has to combine reasoning, writing, code, tools, and browser interaction instead of splitting those jobs across separate systems.

PRODUCT + STRATEGY

Founder and product workflows

Strong fit for research-heavy outputs that still need narrative quality and executive readability.

- Market landscape memos with current web evidence

- Board updates with both narrative and data structure

- Investor or customer-facing presentation drafts

- Product requirement comparison across long documents

- Competitive teardown reports mixing research, charts, and positioning

FINANCE + OPS

Operational analysis

OpenAI is clearly positioning GPT-5.4 toward spreadsheet, modeling, and decision-support work.

- Spreadsheet model creation and review

- Scenario analysis with assumptions tables and commentary

- Monthly business review decks

- Procurement summaries across vendor documents

- Policy reconciliation, invoice explanation, and exception analysis

LEGAL + POLICY

Document-heavy professional work

Useful when the job is mostly reading, structuring, comparing, and explaining large text sets.

- Clause extraction across long contracts

- Issue spotting in transaction documents

- Comparison matrices across agreements or policy versions

- First-pass diligence summaries with evidence grouping

- Structured research memos that need both caution and depth

ENGINEERING

Mixed engineering workflows

Best when code is only one layer of the job and the rest involves docs, shell, browser, and planning.

- Repo migration plans across large codebases

- Debugging workflows that combine code, logs, shell output, and docs

- Architecture review memos plus implementation patches

- UI bug reproduction using screenshots and browser actions

- Internal tool agents that need code, docs, browser, and shell in one loop

SUPPORT + BACK OFFICE

Workflow automation with supervision

Computer use makes GPT-5.4 much more relevant for internal operations, but only with explicit confirmation gates.

- Dashboard navigation and account triage

- CRM updates across multiple internal systems

- Support escalation summaries with screenshots and account history

- Refund, policy, or telecom workflow agents with human confirmation gates

- Cross-tool workflows where the model needs to discover the right action first

AGENT BUILDERS

Long-running agents

This is where GPT-5.4 starts feeling like a platform model, not just a chat model.

- MCP-heavy orchestration with lots of searchable tools

- Browser or VM agents that need screenshot-grounded actions

- Document-heavy agents that also need shell or code execution

- Long-running workflows where the model must keep state across many steps

- Human-in-the-loop agents that need strong intermediate planning, not just final answers

GPT-5.4 vs GPT-5.4 Pro vs GPT-5.3-Codex vs GPT-5.2

If you are choosing inside the current OpenAI lineup, this is the comparison that matters most.

| Dimension | GPT-5.4 | GPT-5.4 Pro | GPT-5.3-Codex | GPT-5.2 |
|---|---|---|---|---|
| Primary role | Best all-around model for professional work | Highest ceiling for hardest tasks | Specialist for agentic coding | Previous frontier work model |
| Context window | 1.05M | 1.05M | 400K | 400K |
| Pricing | $2.50 in / $15 out | $30 in / $180 out | $1.75 in / $14 out | $1.75 in / $14 out |
| Structured outputs | Supported | Not supported | Supported | Supported |
| Distillation | Supported | Not supported | Not supported | Supported |
| Code interpreter / hosted shell | Supported | Not supported | Not the main selling point | Not highlighted like 5.4 |
| Best pick when | You need one model for mixed workflows | Accuracy ceiling matters more than speed or cost | Your workflow is primarily coding inside Codex-like loops | You need a temporary legacy comparison |

The simplest decision rule

- Choose GPT-5.4 if you want the new default and your work spans multiple task types.

- Choose GPT-5.4 Pro if the task is hard enough that extra minutes and extra money are justified.

- Choose GPT-5.3-Codex if you are optimizing mostly for coding-agent behavior.

- Keep GPT-5.2 only for regression testing, temporary fallbacks, or side-by-side migration checks.
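The same rule can be written down as a small routing function. This is only a sketch of the decision logic: the option names are hypothetical, the `gpt-5.3-codex` and `gpt-5.2` keys are used as labels rather than confirmed API identifiers, and the feature flags and context sizes are transcribed from the comparison table (1.05M taken as 1,050,000 tokens, 400K as 400,000).

```javascript
// Feature support and context sizes transcribed from the comparison table.
const MODELS = {
  'gpt-5.4': { structuredOutputs: true, contextWindow: 1050000 },
  'gpt-5.4-pro': { structuredOutputs: false, contextWindow: 1050000 },
  'gpt-5.3-codex': { structuredOutputs: true, contextWindow: 400000 },
  'gpt-5.2': { structuredOutputs: true, contextWindow: 400000 },
};

// Picks a first candidate from the decision rule, then falls back to the
// default model if the candidate lacks a required feature or context size.
function chooseModel({
  mostlyCoding = false,
  needsHighestCeiling = false,
  needsStructuredOutputs = false,
  promptTokens = 0,
} = {}) {
  let candidate = 'gpt-5.4';
  if (needsHighestCeiling) candidate = 'gpt-5.4-pro';
  else if (mostlyCoding) candidate = 'gpt-5.3-codex';

  const spec = MODELS[candidate];
  const missingFeature = needsStructuredOutputs && !spec.structuredOutputs;
  const tooLong = promptTokens > spec.contextWindow;
  return missingFeature || tooLong ? 'gpt-5.4' : candidate;
}
```

Falling back to `gpt-5.4` reflects the guide's point that Pro is not a universal upgrade: a request that needs structured outputs cannot use Pro no matter how hard the task is.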

How To Use GPT-5.4 Well in the API

The model is strong, but the implementation details still matter.

API PLAYBOOK

These six decisions matter most when you move GPT-5.4 from experimentation into production workflows.

SURFACE

Default to the Responses API

That is where OpenAI is concentrating reasoning, tool use, computer use, and multi-step orchestration.

- Use it as the primary integration path for new work

- Prefer it over older chat-shaped wrappers when building agents

REASONING

Choose effort deliberately

GPT-5.4 supports `none`, `low`, `medium`, `high`, and `xhigh`. GPT-5.4 Pro starts at `medium`.

- Use `none` or `low` for extraction and simple transforms

- Use `medium` for most production tasks

- Use `high` or `xhigh` for planning, multi-doc analysis, and agentic tool loops
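A small lookup keeps effort choices consistent across a codebase. The task categories below are hypothetical labels, and where the guidance offers two levels (`none` or `low`, `high` or `xhigh`) the sketch simply picks one. The only rule taken directly from the text is that GPT-5.4 Pro starts at `medium`.

```javascript
// Effort levels in ascending order, as listed above.
const EFFORTS = ['none', 'low', 'medium', 'high', 'xhigh'];

// Hypothetical task categories mapped to a default effort.
const DEFAULT_EFFORT = new Map([
  ['extraction', 'low'],
  ['transform', 'low'],
  ['planning', 'high'],
  ['multi-doc-analysis', 'high'],
  ['agentic-loop', 'high'],
]);

// Returns the effort for a task, raised to the model's minimum if needed.
// Unknown task kinds fall back to 'medium'.
function pickEffort(taskKind, model = 'gpt-5.4') {
  const wanted = DEFAULT_EFFORT.get(taskKind) ?? 'medium';
  const floor = model === 'gpt-5.4-pro' ? 'medium' : 'none';
  return EFFORTS.indexOf(wanted) < EFFORTS.indexOf(floor) ? floor : wanted;
}
```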

LATENCY

Use background mode for hard jobs

OpenAI recommends background mode when the model may work for several minutes, especially with GPT-5.4 Pro.

- Poll queued and in-progress responses

- Do not assume Zero Data Retention compatibility for background mode

TOOLS

Keep large tool catalogs lazy

Tool search is better than stuffing every possible schema into every request.

- Preserves context budget

- Improves cache behavior

- Fits large MCP and enterprise namespaces

BUDGET

Track reasoning-token headroom

Reasoning tokens are billed as output and still consume context budget even if you never see them directly.

- Leave 25K or more headroom while tuning prompts

- Watch incomplete answers caused by hidden reasoning spend

RELIABILITY

Pin snapshots in production

Use the rolling alias while evaluating, then move to dated snapshots for stable releases.

- Example: `gpt-5.4-2026-03-05`

- Avoid silent behavior drift in critical workflows
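In code, pinning can be as simple as selecting the model ID by environment. The `modelFor` helper and the environment names are hypothetical; the two model IDs are the ones quoted in this guide.

```javascript
const ROLLING_ALIAS = 'gpt-5.4';
const PINNED_SNAPSHOT = 'gpt-5.4-2026-03-05';

// Production gets the dated snapshot; every other environment tracks the alias.
function modelFor(environment) {
  return environment === 'production' ? PINNED_SNAPSHOT : ROLLING_ALIAS;
}
```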

Use background mode for long tasks

OpenAI explicitly recommends background mode for GPT-5.4 Pro because hard tasks can take several minutes.

```javascript
import OpenAI from 'openai';

const client = new OpenAI();

// Start the job in background mode so the request returns right away.
let resp = await client.responses.create({
  model: 'gpt-5.4-pro',
  input: 'Analyze these diligence memos and produce a ranked acquisition recommendation.',
  background: true
});

// Poll every 2 seconds while the response is still queued or running.
while (resp.status === 'queued' || resp.status === 'in_progress') {
  await new Promise((resolve) => setTimeout(resolve, 2000));
  resp = await client.responses.retrieve(resp.id);
}

console.log(resp.output_text);
```

One detail that matters for enterprise teams: OpenAI’s background-mode docs say background mode stores response data for roughly 10 minutes to enable polling, so it is not Zero Data Retention compatible.

Pricing, Rollout, and Migration Details

Here are the exact release mechanics that matter.

Availability

- In the API, GPT-5.4 is available as `gpt-5.4`.

- In the API, GPT-5.4 Pro is available as `gpt-5.4-pro`.

- In ChatGPT, GPT-5.4 Thinking started rolling out on March 5, 2026 to Plus, Team, and Pro users.

- Enterprise and Edu can enable early access through admin settings.

- GPT-5.4 Pro is available to Pro and Enterprise plans.

- GPT-5.2 Thinking remains for paid users in the Legacy Models section until June 5, 2026.

Pricing

For GPT-5.4:

- `$2.50` input / 1M tokens

- `$0.25` cached input / 1M tokens

- `$15.00` output / 1M tokens

For GPT-5.4 Pro:

- `$30.00` input / 1M tokens

- `$180.00` output / 1M tokens

OpenAI also says:

- Batch and Flex pricing are available at half the standard rate

- Priority processing is available at 2x the standard rate

- Prompts above 272K input tokens on GPT-5.4 and GPT-5.4 Pro are billed at 2x input and 1.5x output for the full session

- Regional processing endpoints add a 10% uplift for GPT-5.4 and GPT-5.4 Pro
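These rules are easier to reason about as arithmetic. The estimator below is a sketch built only from the prices and multipliers listed above. How the multipliers combine is an assumption (they are simply multiplied together here), as is counting cached tokens toward the 272K threshold and billing cached tokens at the input rate when no cached price is listed. The function and field names are hypothetical.

```javascript
// USD per 1M tokens, copied from the pricing list above.
const PRICES = {
  'gpt-5.4': { input: 2.5, cachedInput: 0.25, output: 15 },
  'gpt-5.4-pro': { input: 30, output: 180 },
};

const LONG_CONTEXT_THRESHOLD = 272000; // input tokens
const TIER_MULTIPLIER = { standard: 1, batch: 0.5, flex: 0.5, priority: 2 };

function estimateCostUsd({
  model,
  inputTokens,
  cachedInputTokens = 0,
  outputTokens,
  tier = 'standard',
  regional = false,
}) {
  const price = PRICES[model];
  const tierMultiplier = TIER_MULTIPLIER[tier];
  if (!price || tierMultiplier === undefined) {
    throw new Error(`Unknown model or tier: ${model} / ${tier}`);
  }

  // Assumption: cached tokens count toward the 272K threshold.
  const longContext = inputTokens + cachedInputTokens > LONG_CONTEXT_THRESHOLD;
  const inputMultiplier = longContext ? 2 : 1;
  const outputMultiplier = longContext ? 1.5 : 1;

  // Assumption: with no listed cached price, cached tokens bill as input.
  const cachedPrice = price.cachedInput ?? price.input;

  const inputCost =
    (inputTokens * price.input + cachedInputTokens * cachedPrice) * inputMultiplier;
  const outputCost = outputTokens * price.output * outputMultiplier;
  const regionalMultiplier = regional ? 1.1 : 1;

  return ((inputCost + outputCost) / 1e6) * tierMultiplier * regionalMultiplier;
}
```

Under these assumptions, a 300,000-token prompt with 10,000 output tokens on `gpt-5.4` comes to about $1.725, versus $0.90 if the long-context surcharge did not apply. That gap is the reason to check prompt size before defaulting to the full window.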

GPT-5.4 migration checklist


What GPT-5.4 Still Does Not Solve

This release is strong, but teams will make mistakes if they read only the headline and skip the tradeoffs.

1. The knowledge cutoff is still August 31, 2025

GPT-5.4 is better at professional work, but it still needs web search for truly current facts. If you ask it about fast-moving topics without web access, you are still leaning on a pre-September-2025 internal cutoff.

2. 1M context does not remove retrieval discipline

OpenAI’s own MRCR and Graphwalks numbers show that extremely large-context retrieval remains meaningfully weaker than short- and mid-context performance.

3. It is text output only

GPT-5.4 accepts text and image inputs, but outputs text. Audio and video are not supported on the model page.

4. GPT-5.4 Pro is not a universal upgrade

Pro gives you a higher performance ceiling, but it drops some useful platform features:

- no structured outputs

- no distillation

- no code interpreter

- no hosted shell

- no skills

So even though Pro is stronger on some benchmarks, the default GPT-5.4 model may be the better product fit.

5. Computer use still needs product-level safeguards

A model that can click, type, and navigate is powerful. It is also a bigger operational and safety surface. Human confirmation, scope limits, logging, and tool-specific permissions matter more, not less.

6. Safety controls can still create false positives

OpenAI says GPT-5.4 is treated as High cyber capability under its Preparedness Framework, with monitoring, trusted access controls, and asynchronous blocking for certain higher-risk requests on Zero Data Retention surfaces. That is sensible, but it also means some production setups should still expect friction and false positives in higher-risk domains.

FAQ

Questions readers usually have

The repeat questions mostly fall into four buckets: default model choice, coding fit, context-window reality, and platform support.

Final Take

The most important thing to understand about GPT-5.4 is that it is not just “GPT-5.2 but better.”

It is OpenAI’s attempt to collapse several previously separate model choices into one serious default:

- office-work reasoning

- coding

- browser and desktop interaction

- tool-heavy orchestration

- large-context analysis

That is a more important shift than a single benchmark number.

If you build products where users need actual work done, not just polished chat responses, GPT-5.4 is the new model to evaluate first. If your task is expensive enough that every extra point of accuracy matters, evaluate GPT-5.4 Pro too. But do it with clean eyes: measure cost, latency, long-context failure modes, structured-output needs, and safety friction before you roll it into production.

The labs are now competing on who can finish longer workflows with less supervision.

GPT-5.4 is OpenAI’s strongest evidence yet that this is the product battle that matters.


Written by Umesh Malik

AI Engineer & Software Developer. Building GenAI applications, LLM-powered products, and scalable systems.

Related Articles

AI & LLMs

OpenAI GPT-5.3 Instant: Fewer Refusals, Better Web Answers, and a Smoother ChatGPT

OpenAI releases GPT-5.3 Instant with 26.8% fewer hallucinations, reduced unnecessary refusals, better web-sourced answers, and a smoother conversational tone. Full breakdown of what changed, why it matters, and what developers need to know.

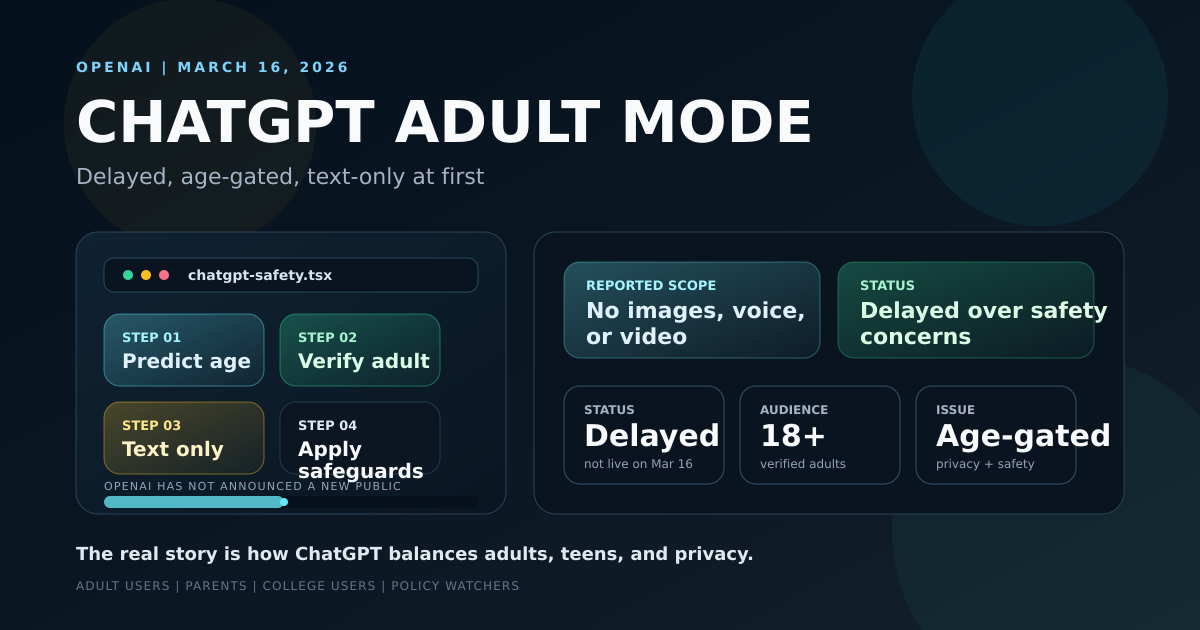

AI & Consumer Tech

ChatGPT "Adult Mode": What OpenAI's Delayed Feature Means for U.S. Adults, Parents, and Privacy

As of March 16, 2026, ChatGPT adult mode is still delayed. This guide covers the reported text-only scope, the delay, age prediction, and why U.S. adults and parents should care.

AI & Education

ChatGPT Interactive Math and Science Visuals: What OpenAI Launched and Why Students, Parents, and Teachers Should Care

OpenAI launched interactive math and science visuals in ChatGPT on March 10, 2026. This guide explains how the new learning modules work, who gets access, which topics they cover, and why U.S. students, parents, and teachers should care.