Nvidia's OpenClaw Strategy: Why Jensen Huang Says Every Company Needs an AI Agent Plan

At GTC 2026, Jensen Huang said every company needs an OpenClaw strategy. Here is what it means and what U.S. teams should do next.

On March 17, 2026, Business Insider reported that Jensen Huang told GTC attendees every company “needs to have an OpenClaw strategy.”

That line sounds like classic conference theater until you translate it into plain English.

Nvidia is saying the next enterprise AI decision is not just which model or which chip you buy. It is whether your company has a plan for AI agents that can actually do work, plus the control layer that keeps those agents from becoming a governance nightmare.

That is the real story.

If you want to know what Nvidia's OpenClaw strategy is, what NemoClaw means, or why Nvidia cares about AI agents now, the short answer is this: Nvidia thinks AI is moving from answering questions to completing tasks, and it wants to own more of that stack than just the GPUs underneath it.

NVIDIA OPENCLAW AT A GLANCE

- 30K+ GTC attendees (official Nvidia preview)

- 190 countries represented at GTC 2026

- $1T: the AI chip market Huang forecast by 2027

- Agents: the shift, as queries become tasks

START WITH THE PART THAT MATCHES YOUR ROLE

This is not just a chip story. It lands differently depending on whether you own enterprise strategy, platform security, or implementation.

CTOs + CIOs

You want the strategic read on why Nvidia is saying this now

Start with the TL;DR, then jump to what OpenClaw means and why this is bigger than another GPU story.

Outcome: You will know why Nvidia wants a role in the agent runtime layer, not only in model training and inference.

PLATFORM + SECURITY

You care about the control plane around agents

Read the NemoClaw section, the stack diagram, and the implementation checklist together.

Outcome: You will leave with a clearer understanding of why secure execution is the genuinely hard part of enterprise agents.

BUILDERS

You want the concrete product shift, not just the slogan

Jump to the query-vs-task comparison, then to the section on what teams should ask before adopting this model.

Outcome: You will know how Nvidia wants builders to think about AI systems that act, not just answer.

TL;DR

- On March 17, 2026, Business Insider reported that Jensen Huang told GTC attendees every company “needs to have an OpenClaw strategy.”

- Nvidia’s own GTC 2026 materials already make the broader context clear: the company is centering agentic AI, AI factories, and physical AI.

- The practical meaning is simple: Nvidia thinks the next AI wave is about agents that complete work, not only chatbots that answer prompts.

- Reporting from Business Insider, The Wall Street Journal, WIRED, and Ars Technica suggests Nvidia is pairing that OpenClaw push with NemoClaw, a more secure enterprise layer around agent execution.

- The strategic bet is that companies will need a plan for where agents act, what tools they can touch, how they are observed, and how they are stopped when something goes wrong.

- That makes this more than another silicon story. It is Nvidia pushing upward from chips into runtime, governance, and enterprise software control.

- For U.S. enterprises, the immediate relevance is strongest in regulated, high-trust workflows where “agentic AI” only matters if it can be deployed safely.

What does Jensen Huang mean by an OpenClaw strategy?

The most useful way to read the line is not as product branding but as a strategic instruction.

An OpenClaw strategy means your company needs a point of view on all of the following:

- which business tasks AI agents should actually perform

- which tools and systems those agents can access

- what approval, monitoring, and rollback model surrounds them

- how you keep agents useful without letting them become an enterprise liability

That is the real leap from chatbot thinking to agent thinking.

In the chatbot era, the core question was: Which model gives us the best answer?

In the agent era, the better question is: Which system can safely take action inside our workflow?

That shift is why the phrase matters.

WHAT NVIDIA IS REALLY SELLING

The slogan matters less than the architecture behind it.

TASK AI

A move from question-answering to work execution

Nvidia is framing the next phase of enterprise AI around systems that do tasks across apps and workflows instead of only generating text.

- Agentic AI

- Multi-step actions

- Background execution

RUNTIME

A layer that coordinates how agents actually act

The more agents touch tools and systems, the more runtime control matters alongside the model itself.

- Tool access

- Policy gates

- Execution tracking

SECURITY

A governance answer to the obvious enterprise fear

Nvidia appears to understand that companies will not trust agents widely until there is a stronger control plane around them.

- Sandboxing

- Approval paths

- Safer enterprise rollout

STACK EXPANSION

Nvidia wants a larger share of the agent economy

The company is no longer arguing only for GPUs. It is trying to matter in models, inference, runtime, and enterprise operations.

- More than chips

- More than inference

- Closer to the application layer

Why Nvidia thinks AI is moving from queries to tasks

This is the key lesson in the story.

The old mental model of AI was mostly prompt in, answer out. That model is still useful, but it is increasingly incomplete for enterprise work.

Nvidia is clearly pushing a new framing:

- a user or system assigns a goal

- the agent plans a sequence of actions

- the runtime manages tool access and execution

- the company audits what happened and decides how much autonomy is acceptable

That is a much bigger systems problem than autocomplete or chat.
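To make the four-step framing concrete, here is a minimal Python sketch of a task loop in which the runtime, not the agent, decides which tool calls run and records every attempt for audit. Everything in it is hypothetical: `Runtime`, `Step`, `plan`, `run_task`, and the three example tools are illustrative stand-ins, not an OpenClaw or NemoClaw API, and `plan` returns a fixed list where a real agent would ask a model to generate the steps.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical sketch: these names are illustrative, not an OpenClaw or NemoClaw API.
Tool = Callable[[str], str]


@dataclass
class Step:
    tool: str  # name of the tool the agent wants to call
    arg: str   # single string argument, to keep the sketch small


@dataclass
class Runtime:
    tools: dict[str, Tool]
    allowed: set[str]  # tools this deployment permits
    audit_log: list[dict] = field(default_factory=list)

    def execute(self, step: Step) -> str:
        # The runtime, not the agent, decides whether a tool call may run.
        if step.tool not in self.allowed or step.tool not in self.tools:
            self.audit_log.append({"tool": step.tool, "arg": step.arg, "status": "blocked"})
            return "blocked"
        result = self.tools[step.tool](step.arg)
        self.audit_log.append({"tool": step.tool, "arg": step.arg, "status": "ok"})
        return result


def plan(goal: str) -> list[Step]:
    # Stand-in for a model-generated plan; a real agent would call an LLM here.
    return [Step("search_tickets", goal), Step("draft_reply", goal), Step("send_email", goal)]


def run_task(goal: str, runtime: Runtime) -> list[str]:
    # Goal in, planned steps executed one at a time through the runtime.
    return [runtime.execute(step) for step in plan(goal)]


runtime = Runtime(
    tools={
        "search_tickets": lambda q: f"3 tickets match '{q}'",
        "draft_reply": lambda q: f"draft for '{q}'",
        "send_email": lambda q: "sent",
    },
    allowed={"search_tickets", "draft_reply"},  # send_email is deliberately not allowed
)
results = run_task("refund delay", runtime)
print(results)
print([entry["status"] for entry in runtime.audit_log])
```

In this run the first two steps execute and `send_email` is blocked because it is not on the allowlist, while the audit log keeps all three attempts. That log is what lets a company decide afterward how much autonomy is acceptable.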

| AI phase | What users mostly ask for | What companies must manage |
|---|---|---|
| Chatbot era | Answer my question or draft this text | Prompt quality, model output, basic safety |
| Agent era | Handle this task across multiple steps and tools | Execution rights, context, policy, and observability |
| Secure agent era | Do useful work without becoming risky | Approvals, rollback, identity, and enterprise trust |

My inference from the current sources is that Nvidia is trying to make this mental shift feel inevitable. It wants companies to think: if cloud strategy became mandatory, and mobile strategy became mandatory, then agent strategy will become mandatory too.

Where NemoClaw fits, and why it matters

This is where the story becomes more interesting than a slogan.

Reporting from Business Insider, The Wall Street Journal, WIRED, and Ars Technica suggests Nvidia is also advancing NemoClaw, which reads less like a flashy public phrase and more like the answer to the real enterprise question:

How do you let AI agents do useful work without giving them unsafe freedom?

That is the part CIOs, CISOs, and platform teams actually care about.

If OpenClaw is the ambition, NemoClaw appears to be the control layer around that ambition.

THE USEFUL WAY TO READ OPENCLAW AND NEMOCLAW

The names matter less than the role each layer is trying to play.

OPENCLAW

The strategic push toward action-taking AI agents

OpenClaw is best understood as the rallying idea behind enterprise AI systems that move from answering to acting.

- Assign the task, not just the prompt

- Orchestrate multi-step work

- Touch real tools and systems

- Make agent deployment a company-wide strategy question

NEMOCLAW

The guardrails, runtime, and safer enterprise execution layer

NemoClaw matters because model quality is not what enterprises fear first. They fear agent mistakes, policy violations, and uncontrolled system access.

- Control tool permissions

- Add monitoring and approvals

- Reduce runaway behavior risk

- Make enterprise adoption defensible

Why this is bigger than another chip story

If you only read this as Nvidia hype, you will miss the deeper signal.

The official Nvidia GTC framing and the recent reporting point in the same direction: Nvidia is trying to extend its relevance upward through the stack.

- March 3, 2026: Nvidia previews GTC 2026 around agentic systems and the age of AI. The official GTC preview makes clear that Nvidia wants this conference to be read as a platform story, not only a hardware keynote.

- March 9, 2026: WIRED reports on Nvidia's broader agent push. Coverage starts connecting Nvidia's enterprise AI pitch to agent software and safer deployment patterns.

- March 16, 2026: GTC keynote week begins. Nvidia's broader themes around AI factories, physical AI, and agentic systems move to the center of the tech news cycle.

- March 17, 2026: Business Insider reports Huang's OpenClaw strategy message. The public framing sharpens: every company now needs an explicit plan for AI agents and the systems around them.

The point is not that Nvidia suddenly stopped caring about chips.

The point is that chips alone are no longer enough to define the strategic narrative. The next fight is around how agents are deployed, how safe they are, and which company becomes the trusted layer between the model and the enterprise workflow.

That is a much more durable market position.

Why this matters so much to U.S. companies right now

For U.S. businesses, the relevance is immediate because the story sits at the overlap of:

- enterprise productivity pressure

- AI automation ambition

- regulatory and legal caution

- security and data-governance reality

My inference from Nvidia’s framing and the surrounding reporting is that the company is speaking directly to the people who approve enterprise AI budgets in the United States:

- CTOs deciding where agents are allowed to act

- CIOs trying to standardize enterprise AI stacks

- CISOs worried about tool abuse and data leakage

- product and ops leaders looking for cost-effective automation that does not blow up governance

That is why this story is more teachable than a normal conference recap. It gives readers a practical new lens:

The real bottleneck in enterprise AI is no longer only intelligence. It is controlled execution.

If your company agrees with Nvidia, the next move is operational

This is the step most teams skip.

They hear the strategic message, buy into the future, and then fail to translate it into a controlled rollout model. If you actually think OpenClaw-style planning matters, the right response is not “launch more agents.” It is to narrow the scope and raise the discipline.

HOW TO RESPOND WITHOUT CREATING AGENT CHAOS

The right first move is a safer operating model, not a louder AI slogan.

START NARROW

Pick one workflow that is valuable but bounded

Do not begin with open-ended enterprise autonomy. Start with a task that is repetitive, observable, and easy to roll back.

- Support triage

- Internal document routing

- Controlled ops workflows

Why this step exists: This gives you signal about real value without exposing the whole company to agent failure modes.

DEFINE IDENTITY

Treat every agent like a system actor with permissions

An agent should not be a magical black box. It needs an identity model, tool boundaries, and clear action rights.

- Explicit tool access

- Scoped data permissions

- Per-agent policy boundaries

Why this step exists: This is the difference between enterprise deployment and demo theater.
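One way to read "treat every agent like a system actor" is as a deny-by-default permission check tied to an agent identity. The sketch below assumes a simple model in which each agent carries an ID, an allowlist of tools, and a set of resource-path prefixes it may touch. `AgentIdentity`, `authorize`, and the tool and path names are hypothetical, and a real deployment would more likely back this with its existing identity provider than with an in-memory object.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentIdentity:
    # Hypothetical identity record: who the agent is and what it may touch.
    agent_id: str
    allowed_tools: frozenset[str]
    data_scopes: frozenset[str]  # resource-path prefixes this agent may access


def authorize(agent: AgentIdentity, tool: str, resource: str) -> tuple[bool, str]:
    """Deny by default: both the tool and the resource must be in scope."""
    if tool not in agent.allowed_tools:
        return False, f"{agent.agent_id} may not use tool '{tool}'"
    if not any(resource.startswith(scope) for scope in agent.data_scopes):
        return False, f"{agent.agent_id} has no data scope covering '{resource}'"
    return True, "allowed"


triage_bot = AgentIdentity(
    agent_id="support-triage-01",
    allowed_tools=frozenset({"read_ticket", "set_priority"}),
    data_scopes=frozenset({"tickets/"}),
)

checks = [
    authorize(triage_bot, "read_ticket", "tickets/4812"),      # in scope
    authorize(triage_bot, "issue_refund", "tickets/4812"),     # tool not granted
    authorize(triage_bot, "read_ticket", "hr/salaries.csv"),   # data not granted
]
for allowed, reason in checks:
    print(allowed, reason)
```

Returning a reason string alongside the decision is a design choice that makes each denial explainable in logs, which matters once security teams start reviewing what agents tried to do.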

KEEP HUMANS IN THE LOOP

Require approval for irreversible or high-risk actions

Autonomy should increase only where the cost of a mistake is low and the rollback path is clear.

- Human review before customer impact

- Approval before sensitive writes

- Pause paths for unexpected behavior

Why this step exists: You get real automation without pretending every task is safe for full autonomy.
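The approval rule can be expressed as a small policy function that runs before every action. This is a minimal sketch under assumed inputs: the `Action` flags (`reversible`, `customer_facing`, `writes_sensitive_data`), the `Decision` values, and the operator-controlled `agent_paused` switch are hypothetical names, and a real system would likely weigh more signals than three booleans.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTO = "auto"                      # safe to run without review
    NEEDS_APPROVAL = "needs_approval"  # queue for a human reviewer
    PAUSED = "paused"                  # operator has halted this agent


@dataclass(frozen=True)
class Action:
    # Hypothetical risk flags attached to each proposed action.
    name: str
    reversible: bool
    customer_facing: bool
    writes_sensitive_data: bool


def gate(action: Action, agent_paused: bool) -> Decision:
    # Pause path: an operator kill switch overrides everything else.
    if agent_paused:
        return Decision.PAUSED
    # Irreversible, customer-facing, or sensitive writes wait for a human.
    if not action.reversible or action.customer_facing or action.writes_sensitive_data:
        return Decision.NEEDS_APPROVAL
    # Low-risk, reversible work may run autonomously.
    return Decision.AUTO


tag = Action("tag_ticket", reversible=True, customer_facing=False, writes_sensitive_data=False)
reply = Action("send_customer_reply", reversible=False, customer_facing=True, writes_sensitive_data=False)
update = Action("update_billing_record", reversible=True, customer_facing=False, writes_sensitive_data=True)

for a in (tag, reply, update):
    print(a.name, gate(a, agent_paused=False).value)
print(tag.name, gate(tag, agent_paused=True).value)
```

Checking the pause path first means a halted agent cannot act even on low-risk tasks. Autonomy is granted only in the last branch, where the action is reversible and touches neither customers nor sensitive data.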

MEASURE TRUST

Track execution quality, not just model quality

The useful KPI is no longer just answer accuracy. It is whether the full system acts correctly, safely, and observably.

- Action success rate

- Policy violation rate

- Rollback and intervention frequency

Why this step exists: This is how you learn whether your agent strategy is becoming operationally trustworthy.
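These KPIs are straightforward to compute once every agent action is logged. The sketch assumes a hypothetical per-action `ActionRecord` with four boolean outcome fields, and it reports each metric as a share of all logged actions. How you define "success" or "violation" in practice is a policy decision that this code does not make for you.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ActionRecord:
    # Hypothetical outcome flags logged for each agent action.
    succeeded: bool
    policy_violation: bool
    human_intervened: bool
    rolled_back: bool


def trust_metrics(records: list[ActionRecord]) -> dict[str, float]:
    """Return each KPI as a fraction of all logged actions."""
    if not records:
        raise ValueError("no actions logged yet")
    n = len(records)
    # Summing booleans counts the True values.
    return {
        "action_success_rate": sum(r.succeeded for r in records) / n,
        "policy_violation_rate": sum(r.policy_violation for r in records) / n,
        "intervention_rate": sum(r.human_intervened for r in records) / n,
        "rollback_rate": sum(r.rolled_back for r in records) / n,
    }


log = [
    ActionRecord(succeeded=True, policy_violation=False, human_intervened=False, rolled_back=False),
    ActionRecord(succeeded=True, policy_violation=False, human_intervened=True, rolled_back=False),
    ActionRecord(succeeded=False, policy_violation=True, human_intervened=True, rolled_back=True),
    ActionRecord(succeeded=True, policy_violation=False, human_intervened=False, rolled_back=False),
]
print(trust_metrics(log))
```

Tracked per workflow over time, falling intervention and rollback rates are one signal that an agent may be ready for a little more autonomy.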

What teams should ask before adopting an OpenClaw strategy

If the phrase sticks, a lot of teams will repeat it without translating it into operational questions.

That would be a mistake.

FIVE QUESTIONS THAT MATTER MORE THAN THE SLOGAN


That is the difference between having a buzzword and having a strategy.

Final take

The most useful reading of Nvidia’s OpenClaw strategy line is not “Jensen Huang said something catchy at GTC.”

It is this:

Nvidia is trying to convince the market that AI agents are becoming a first-class enterprise planning problem, and that the winning companies will need a secure runtime around those agents, not just a smart model and fast hardware.

That is a meaningful shift.

It tells you where the AI market is going:

- from copilots to agents

- from prompts to tasks

- from model choice to runtime control

- from demo intelligence to enterprise trust

For U.S. companies, that is a timely message because the next wave of AI adoption will be judged less by how impressive the model sounds and more by whether the system can safely act inside real workflows.

That is why this is worth paying attention to.


Sources

- Business Insider: Nvidia CEO Jensen Huang says every company needs an OpenClaw strategy

- The Wall Street Journal: Nvidia CEO Jensen Huang is building a platform for AI agents

- NVIDIA Newsroom: Jensen Huang and global technology leaders to showcase the age of AI at GTC 2026

- NVIDIA: GTC 2026 keynote

- WIRED: Nvidia is planning to launch an open-source AI agent platform

- Ars Technica: Nvidia is reportedly planning its own open-source OpenClaw competitor

- Financial Times: Nvidia chief says AI chip market could reach $1tn by 2027

Written by Umesh Malik

AI Engineer & Software Developer. Building GenAI applications, LLM-powered products, and scalable systems.

Related Articles

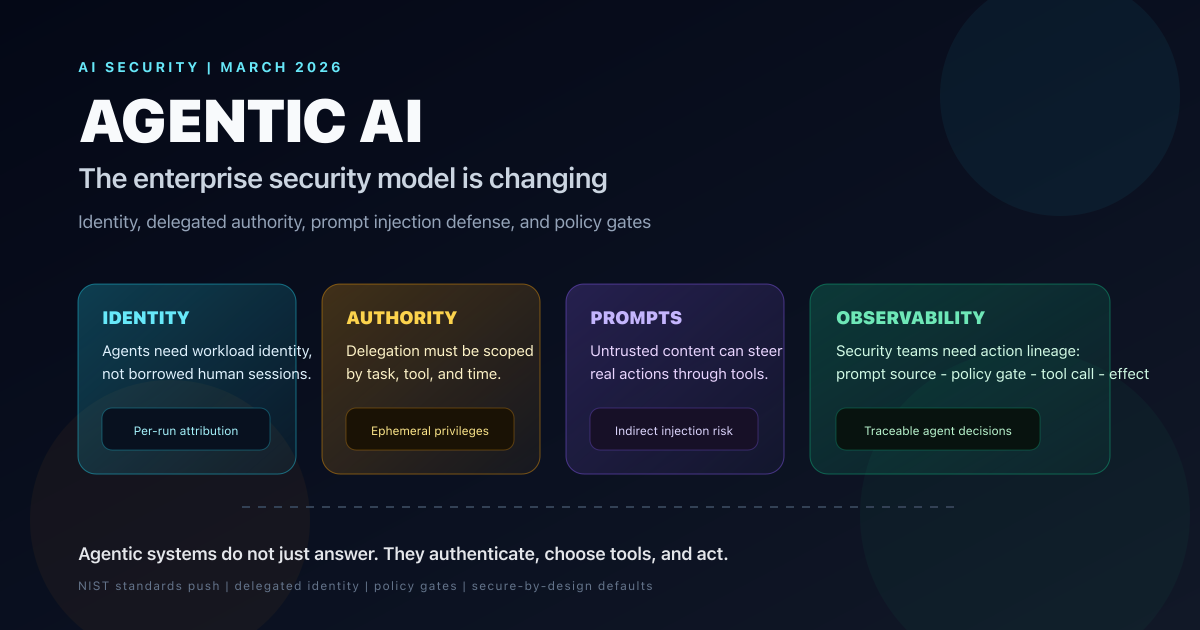

AI & Security

Agentic AI Is Changing the Security Model for Enterprise Systems: What CISOs Need to Fix Now

Forbes surfaced the shift, but the deeper story is that agentic AI breaks static enterprise trust models. Here is how identity, delegated authority, prompt injection defense, and tool-level policy need to change in 2026.

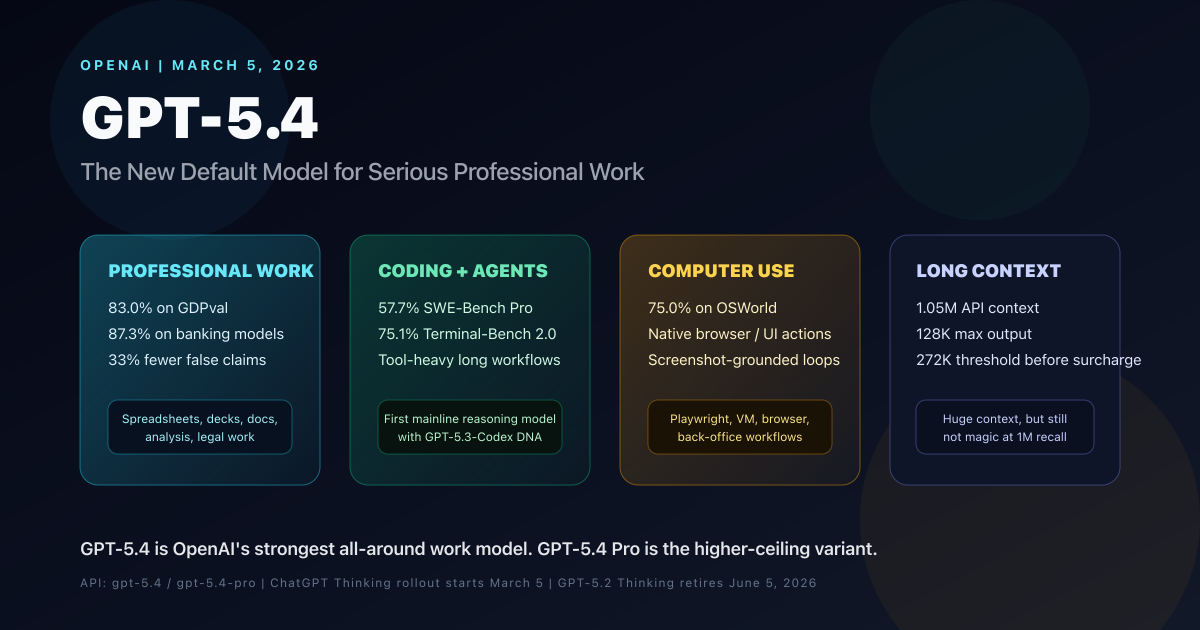

AI & LLMs

OpenAI GPT-5.4 Complete Guide: Benchmarks, Use Cases, Pricing, API, and GPT-5.4 Pro Comparison

OpenAI GPT-5.4 is the new mainline reasoning model for professional work. This complete guide covers benchmarks, use cases, pricing, API details, long-context behavior, computer use, tool search, GPT-5.4 Pro, and how it compares with GPT-5.2 and GPT-5.3-Codex.

AI & Geopolitics

DeepSeek V4 Is About to Test America’s AI Lead: What We Know Before Launch

DeepSeek V4 is expected in early March 2026. Here is what is confirmed, what remains unverified, and how it challenges U.S. AI rivals.